AI Deploy - Tutorial - Build & use a Streamlit image

AI Deploy is covered by OVHcloud Public Cloud Special Conditions.

Objective

Streamlit is a Python framework that turns scripts into shareable web applications.

The purpose of this tutorial is to provide a concrete example of how to build and use a custom Docker image for a Streamlit application.

Requirements

- an AI Deploy project created inside a Public Cloud project

- a user for AI Deploy

- Docker installed on your local computer

- some knowledge of building Docker images and writing a Dockerfile

Instructions

Write a simple Streamlit application

Create a simple Python file named simple_app.py.

Inside that file, import your required modules:

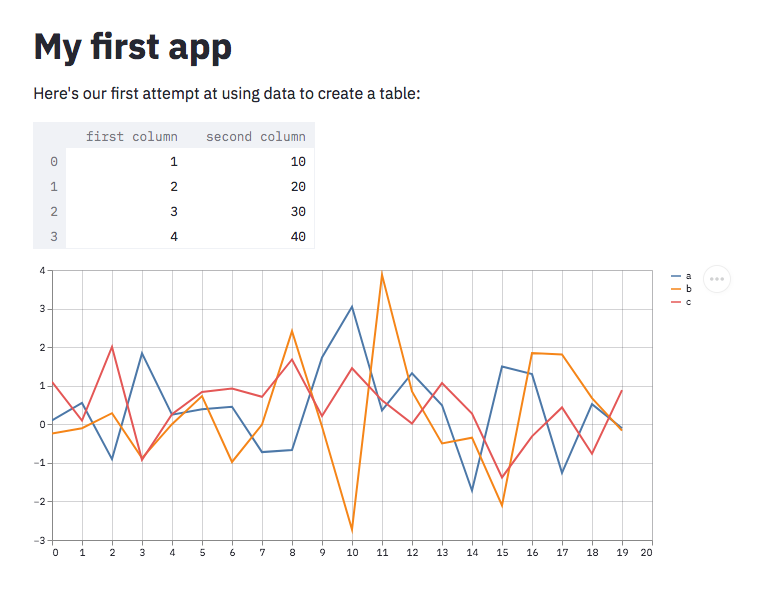

Display all information you want on your Streamlit application:

- More information about Streamlit capabilities can be found here

- Direct link to the full Python file can be found here
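As a minimal sketch of the two steps above, the shell snippet below writes a small simple_app.py that imports streamlit and pandas, then displays a title, a sentence and a table. The title, the text and the sample values are illustrative assumptions, not the content of the original file; st.title, st.write and pd.DataFrame are standard Streamlit and pandas calls.

```shell
# Write an illustrative simple_app.py into the current directory.
# The displayed text and table values are placeholders chosen for this sketch.
cat > simple_app.py <<'EOF'
import pandas as pd
import streamlit as st

# Page title and a short introduction
st.title("My first app")
st.write("Here is a first attempt at displaying a table:")

# Display a small pandas DataFrame as a table
st.write(pd.DataFrame({
    "first column": [1, 2, 3, 4],
    "second column": [10, 20, 30, 40],
}))
EOF
```

Any other Streamlit element can be added to the file in the same way.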

Write the Dockerfile for your application

Your Dockerfile should start with the FROM instruction indicating the parent image to use. In our case, we choose to start from a classic Python image.

Install the Python modules you need using a pip install ... command. In our case, we only need these two modules:

- streamlit

- pandas

Install your application inside your image. In our case, we just copy our python file inside the /opt directory.

Define your default launching command to start the application:

In order to access the app from the outside world, don't forget to add the --server.address=0.0.0.0 option to your streamlit run ... command. This tells the process that it has to bind to all network interfaces and not only to localhost.

Create the home directory of the ovhcloud user (42420:42420) and give it correct access rights:

This last step is mandatory because Streamlit needs to be able to write inside the HOME directory of the owner of the process in order to work properly.

- More information about Dockerfiles can be found here

- Direct link to the full Dockerfile can be found here
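Put together, the steps of this section could look like the Dockerfile written by the snippet below. This is a sketch under stated assumptions: the python:3.11 tag and the /workspace home directory are choices made for this example, while the two modules, the /opt location, the --server.address=0.0.0.0 option and the 42420:42420 user come from the text above.

```shell
# Write an illustrative Dockerfile next to simple_app.py.
cat > Dockerfile <<'EOF'
# Parent image (the exact tag is an assumption of this sketch)
FROM python:3.11

# Python modules needed by the application
RUN pip install streamlit pandas

# Copy the application into the /opt directory of the image
COPY simple_app.py /opt/simple_app.py

# Default launching command: bind on all network interfaces
CMD ["streamlit", "run", "/opt/simple_app.py", "--server.address=0.0.0.0"]

# Home directory owned by the OVHcloud user
# (the /workspace path is an assumption of this sketch)
RUN mkdir -p /workspace && chown -R 42420:42420 /workspace
ENV HOME=/workspace
WORKDIR /workspace
EOF
```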

Build the Docker image from the Dockerfile

From the directory containing your Dockerfile, run one of the following commands to build your application image:

- The first command builds the image using your system’s default architecture. This may work if your machine already uses the linux/amd64 architecture, which is required to run containers with our AI products. However, on systems with a different architecture (e.g. ARM64 on Apple Silicon), the resulting image will not be compatible and cannot be deployed.

- The second command explicitly targets the linux/amd64 architecture to ensure compatibility with our AI services. This requires buildx, which is not installed by default. If you haven’t used buildx before, you can install it by running: docker buildx install

The dot . argument indicates that your build context (the location of the Dockerfile and other needed files) is the current directory.

The -t argument allows you to choose the identifier to give to your image. Usually image identifiers are composed of a name and a version tag <name>:<version>. For this example we chose streamlit-example:latest.
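Based on the arguments described above, the two build commands plausibly take the following form. The IMAGE variable is only a convenience introduced for this sketch; run one of the two options, not both.

```shell
# Image identifier chosen in this tutorial
IMAGE="streamlit-example:latest"

# Option 1: build for the default architecture of your machine
docker build . -t "$IMAGE"

# Option 2: build explicitly for linux/amd64 (requires buildx)
docker buildx build --platform linux/amd64 -t "$IMAGE" .
```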

Test it locally (optional)

Run the following Docker command to launch your application locally on your computer:

The -p 8501:8501 argument indicates that you want to redirect port 8501 of your local machine to port 8501 of the Docker container. Port 8501 is the default port used by Streamlit applications.

Don't forget the --user=42420:42420 argument if you want to simulate the exact same behavior that will occur on AI Deploy apps. It executes the docker container as the specific OVHcloud user (user 42420:42420).

Once started, your application should be available on http://localhost:8501.
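A local run using the arguments described above might look like this. The --rm and -it options are additions made for this sketch (they remove the container on exit and attach your terminal to it); the port mapping and the user come from the text.

```shell
IMAGE="streamlit-example:latest"

# Run the image locally as the OVHcloud user, exposing the Streamlit port
docker run --rm -it -p 8501:8501 --user=42420:42420 "$IMAGE"
```

Stop the container with Ctrl+C when you are done testing.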

Push the image into the shared registry

The shared registry should only be used for testing purposes. Please consider creating and attaching your own registry. More information about this can be found here. The images pushed to this registry are for AI Tools workloads only, and will not be accessible for external uses.

Find the address of your shared registry by launching this command:

Log in to the shared registry with your usual AI Platform user credentials:

Push the compiled image into the shared registry:
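The three steps above can be sketched as follows. The values between angle brackets are placeholders to replace with your own, and the variable names are assumptions made for this example. Passing the password through --password-stdin is one possible way to log in without typing it on the command line.

```shell
# Placeholders: replace with your own values
REGISTRY="<shared-registry-address>"   # address reported by "ovhai registry list"
OVH_USER="<user>"
OVH_PASSWORD="<password>"
IMAGE="streamlit-example:latest"

# 1. Find the address of the shared registry
ovhai registry list

# 2. Log in to the shared registry
printf '%s' "$OVH_PASSWORD" | docker login --username "$OVH_USER" --password-stdin "$REGISTRY"

# 3. Tag the local image with the registry address, then push it
docker tag "$IMAGE" "$REGISTRY/$IMAGE"
docker push "$REGISTRY/$IMAGE"
```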

Launch the AI Deploy app

The following command starts a new app running your Streamlit application:

--default-http-port 8501 indicates that the app URL should reach port 8501 of the container.

--cpu 1 indicates that we only request 1 CPU for that app.

Consider adding the --unsecure-http flag if you want your application to be reachable without any authentication.
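With the options described above, the launch command plausibly looks like the sketch below. The registry address is a placeholder, and the image path assumes the image was pushed under the name streamlit-example:latest.

```shell
# Placeholder: replace with the address of your shared registry
REGISTRY="<shared-registry-address>"

# Start the app with 1 CPU, exposing the Streamlit port 8501
ovhai app run \
    --default-http-port 8501 \
    --cpu 1 \
    "$REGISTRY/streamlit-example:latest"
```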

Once the AI Deploy app is running you can access your Streamlit application directly from the app's URL.

Go further

- Do you want to use Streamlit to deploy an AI model for an audio classification task? Here it is.

- You can also deploy an AI model with another tool: Flask. Refer to this tutorial.

If you need training or technical assistance to implement our solutions, contact your sales representative or click on this link to get a quote and ask our Professional Services experts for a custom analysis of your project.

Feedback

Please send us your questions, feedback and suggestions to improve the service:

- On the OVHcloud Discord server