How to Configure Your NIC for OVHcloud Link Aggregation in SLES 15

Objective

OVHcloud Link Aggregation (OLA) technology is designed by our teams to increase your server's availability and boost the efficiency of your network connections. In just a few clicks, you can aggregate your network cards and make your network links redundant: if one link goes down, traffic is automatically redirected to another available link. Aggregation also increases the total available bandwidth (for example, it is doubled when two links are aggregated). Aggregation is based on IEEE 802.3ad, Link Aggregation Control Protocol (LACP) technology.

This guide explains how to bond your interfaces to use them for OLA in SLES 15.

Requirements

OVHcloud Control Panel Access

- Direct link: Dedicated Servers

- Navigation path: Bare Metal Cloud > Dedicated servers > select your server

Instructions

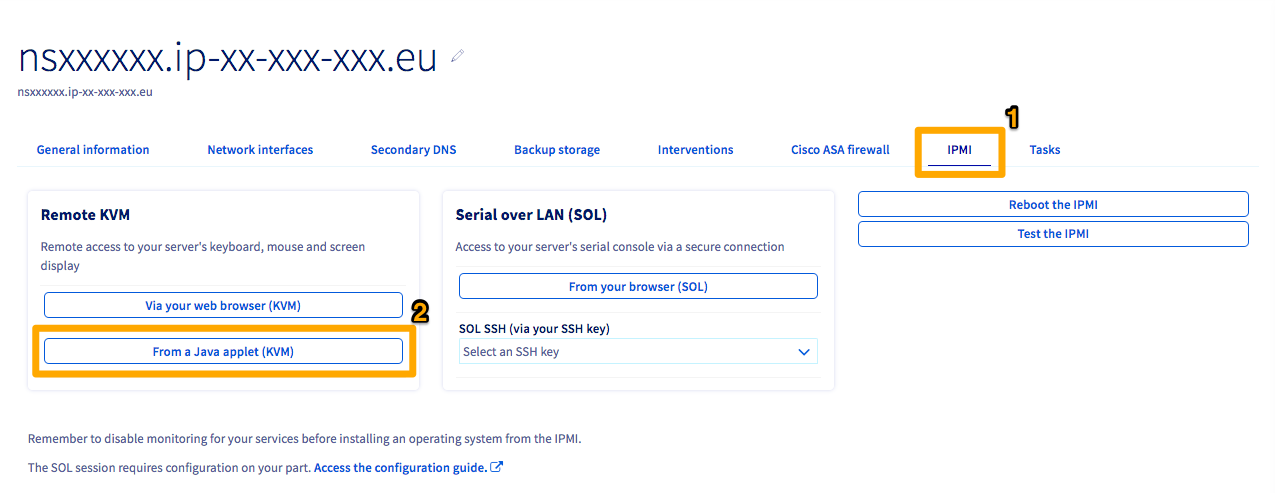

If your NICs are in a private-private OLA configuration, the server has no public connectivity and you will be unable to SSH into it. In that case, use the IPMI tool to access the server.

Click the IPMI tab (1).

Next, click the From a Java applet (KVM) button (2).

A JNLP file will be downloaded. Open it to launch the KVM console, then log in with valid credentials for the server.

By default, with an OVHcloud template, the NICs are named eth0 and eth1. If you are not using an OVHcloud template, you can find the names of your interfaces as described in the "Retrieving interface names" section below.

The values (MAC addresses, IP addresses, interface names, etc.) shown in the configurations and examples below are examples only. Replace them with your own.

Retrieving MAC addresses

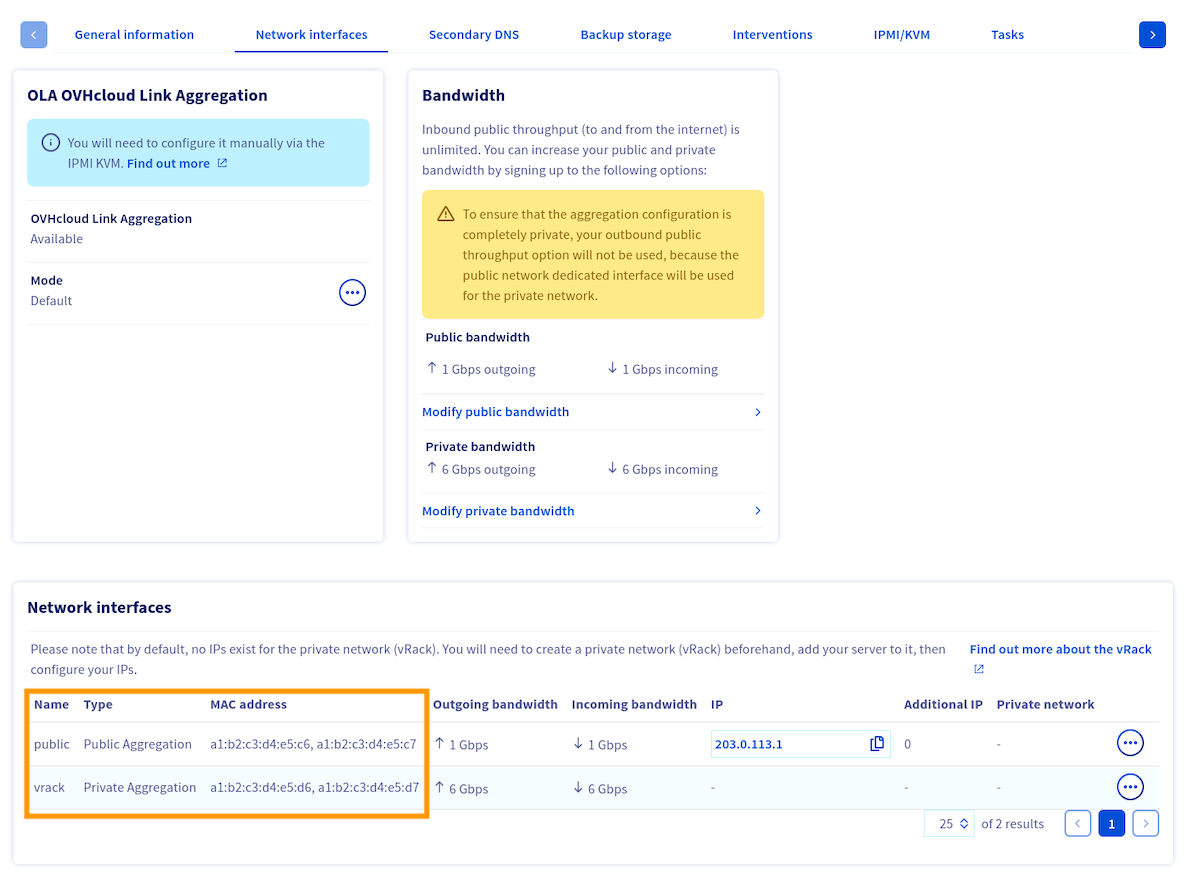

Switch to the Network Interfaces tab and note the MAC address of each interface (public/private), displayed at the bottom of the menu.

Please note that the MAC address of the main public interface is the one receiving DHCP offers, both in the server's operating system and in rescue mode. This interface handles public connectivity in the default configuration.

Additionally, the MAC address of the main private interface is the one with the lowest value. In the example image above, this is the address a1:b2:c3:d4:e5:d6.
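If your server has several private interfaces, picking the lowest MAC address by eye is error-prone. Below is a minimal sketch of a hypothetical `lowest_mac` helper (not an OVHcloud tool) that prints the lowest of the MAC addresses passed to it, assuming they are in the usual colon-separated hexadecimal form shown in the Control Panel:

```shell
#!/bin/sh
# lowest_mac: print the lowest of the MAC addresses given as arguments.
# Hypothetical helper; expects addresses in xx:xx:xx:xx:xx:xx form.
lowest_mac() {
  # Lowercase the hex digits so upper- and lowercase input compare the same,
  # then sort byte-wise (LC_ALL=C). Every field is two hex digits, so the
  # byte-wise order of the strings equals their numeric order.
  printf '%s\n' "$@" | tr 'A-F' 'a-f' | LC_ALL=C sort | head -n 1
}
```

For example, `lowest_mac a1:b2:c3:d4:e5:f7 a1:b2:c3:d4:e5:d6` prints `a1:b2:c3:d4:e5:d6` (the first address is made up for illustration). Pass only the MAC addresses of your private interfaces.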

Now that you know which MAC addresses belong to each type of interface (public/private), you need to retrieve the interface names.

Retrieving interface names

If you lose network connectivity to your server, access it through the IPMI/KVM console as described at the beginning of this guide.

To retrieve the names of the interfaces, execute the following command:
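For example, with the iproute2 `ip` command available on SLES 15 (run on the server; the second form is an optional, more compact view):

```shell
# List all interfaces with their MAC addresses (link/ether lines) and IP addresses
ip a
# One line per interface: name, state and MAC address
ip -br link
```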

This command lists numerous interfaces. If you have trouble identifying your physical interfaces, note that by default the first one still has the server's public IP address attached to it.
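To match the MAC addresses noted in the Control Panel to interface names, you can also read the addresses the kernel exposes under /sys/class/net. Below is a minimal sketch of a hypothetical `iface_for_mac` helper; its optional second argument (the directory to scan) exists only so the function can be tried against a test directory:

```shell
#!/bin/sh
# iface_for_mac: print the name of the interface whose MAC address is $1.
# Hypothetical helper; scans <root>/<iface>/address, root defaults to /sys/class/net.
iface_for_mac() {
  # The kernel reports MAC addresses in lowercase, so normalise the input
  want=$(printf '%s' "$1" | tr 'A-F' 'a-f')
  root=${2:-/sys/class/net}
  for dir in "$root"/*; do
    # Skip entries without a readable address file
    [ -r "$dir/address" ] || continue
    if [ "$(cat "$dir/address")" = "$want" ]; then
      basename "$dir"
      return 0
    fi
  done
  # No interface carries this MAC address
  return 1
}
```

On the server, `iface_for_mac a1:b2:c3:d4:e5:d6` prints the matching interface name, or returns a non-zero status if no interface has that address.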

Here's an output example:

Once you have determined the names of your interfaces, you can configure interface bonding in the OS.

Configuring interface bonding

Follow the instructions below that match your server configuration:

- Two interfaces: Advance servers with two physical NICs.

- Four interfaces - Double LAG: Scale and High-Grade servers with OLA in Active - Double LAG mode (public + private aggregates). This requires OLA to be enabled in the OVHcloud Control Panel.

- Four interfaces - Fully Private: Scale and High-Grade servers with OLA in Active - Fully Private mode (single private aggregate for vRack). This requires OLA to be enabled in the OVHcloud Control Panel.

Two interfaces

Create the bond configuration file /etc/sysconfig/network/ifcfg-bond0:

Static IP
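A minimal sketch of ifcfg-bond0 with a static address, assuming the interface names used in this guide (ens22f0np0, ens22f1np1) and a hypothetical example address 192.168.0.10/24. The BONDING_* variables are the standard wicked/sysconfig bonding settings; `mode=802.3ad` selects LACP, on which OLA is based:

```shell
# /etc/sysconfig/network/ifcfg-bond0 (static IP, two interfaces)
STARTMODE='auto'
BOOTPROTO='static'
# Example address only: replace with your own IP and prefix length
IPADDR='192.168.0.10/24'
BONDING_MASTER='yes'
# LACP (IEEE 802.3ad); check each member's link state every 100 ms
BONDING_MODULE_OPTS='mode=802.3ad miimon=100'
BONDING_SLAVE_0='ens22f0np0'
BONDING_SLAVE_1='ens22f1np1'
```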

Then configure each physical interface. Edit /etc/sysconfig/network/ifcfg-ens22f0np0:
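A minimal sketch of the contents to use in place of the existing file, following SUSE's documented settings for bonding members (the interface carries no IP address of its own):

```shell
# /etc/sysconfig/network/ifcfg-ens22f0np0 (bond member)
# No address of its own: the IP is configured on the bond
BOOTPROTO='none'
# Start mode SUSE documents for bonding members
STARTMODE='hotplug'
```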

Create /etc/sysconfig/network/ifcfg-ens22f1np1:
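A minimal sketch of this bond-member file, following SUSE's documented settings for bonding members:

```shell
# /etc/sysconfig/network/ifcfg-ens22f1np1 (bond member)
# No address of its own: the IP is configured on the bond
BOOTPROTO='none'
# Start mode SUSE documents for bonding members
STARTMODE='hotplug'
```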

DHCP
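A minimal sketch of ifcfg-bond0 obtaining its address by DHCP instead, assuming the interface names used in this guide; it only gets an address if a DHCP server answers on that network:

```shell
# /etc/sysconfig/network/ifcfg-bond0 (DHCP, two interfaces)
STARTMODE='auto'
BOOTPROTO='dhcp'
BONDING_MASTER='yes'
# LACP (IEEE 802.3ad); check each member's link state every 100 ms
BONDING_MODULE_OPTS='mode=802.3ad miimon=100'
BONDING_SLAVE_0='ens22f0np0'
BONDING_SLAVE_1='ens22f1np1'
```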

The physical interface configuration files remain the same as above.

Four interfaces - Double LAG

This configuration bonds public interfaces into bond0 (with public IP) and private interfaces into bond1 (for vRack).

Create the public bond configuration file /etc/sysconfig/network/ifcfg-bond0:

Static IP
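A minimal sketch of the public bond, assuming ens22f0np0 and ens22f1np1 are your public interfaces (check this against the MAC addresses from the Control Panel) and using 203.0.113.10/24, an address from the documentation range, as a placeholder for your public IP:

```shell
# /etc/sysconfig/network/ifcfg-bond0 (public bond, static IP)
STARTMODE='auto'
BOOTPROTO='static'
# Placeholder: replace with your server's public IP and prefix length
IPADDR='203.0.113.10/24'
BONDING_MASTER='yes'
# LACP (IEEE 802.3ad); check each member's link state every 100 ms
BONDING_MODULE_OPTS='mode=802.3ad miimon=100'
# Assumed public interfaces
BONDING_SLAVE_0='ens22f0np0'
BONDING_SLAVE_1='ens22f1np1'
# The default gateway is not set in this file; on SLES it is usually
# declared in /etc/sysconfig/network/routes or ifroute-bond0
```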

Create the private bond configuration file /etc/sysconfig/network/ifcfg-bond1:
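A minimal sketch of the private (vRack) bond, assuming ens33f0np0 and ens33f1np1 are your private interfaces and using the hypothetical example address 192.168.0.10/24:

```shell
# /etc/sysconfig/network/ifcfg-bond1 (private bond, vRack)
STARTMODE='auto'
BOOTPROTO='static'
# Example vRack private address: replace with your own
IPADDR='192.168.0.10/24'
BONDING_MASTER='yes'
# LACP (IEEE 802.3ad); check each member's link state every 100 ms
BONDING_MODULE_OPTS='mode=802.3ad miimon=100'
# Assumed private interfaces
BONDING_SLAVE_0='ens33f0np0'
BONDING_SLAVE_1='ens33f1np1'
```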

Then configure each physical interface. Edit /etc/sysconfig/network/ifcfg-ens22f0np0:
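A minimal sketch of the contents to use in place of the existing file, following SUSE's documented settings for bonding members (the interface carries no IP address of its own):

```shell
# /etc/sysconfig/network/ifcfg-ens22f0np0 (bond member)
# No address of its own: the IP is configured on the bond
BOOTPROTO='none'
# Start mode SUSE documents for bonding members
STARTMODE='hotplug'
```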

Create /etc/sysconfig/network/ifcfg-ens22f1np1:
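A minimal sketch of this bond-member file, following SUSE's documented settings for bonding members:

```shell
# /etc/sysconfig/network/ifcfg-ens22f1np1 (bond member)
# No address of its own: the IP is configured on the bond
BOOTPROTO='none'
# Start mode SUSE documents for bonding members
STARTMODE='hotplug'
```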

Create /etc/sysconfig/network/ifcfg-ens33f0np0:
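A minimal sketch of this bond-member file, following SUSE's documented settings for bonding members:

```shell
# /etc/sysconfig/network/ifcfg-ens33f0np0 (bond member)
# No address of its own: the IP is configured on the bond
BOOTPROTO='none'
# Start mode SUSE documents for bonding members
STARTMODE='hotplug'
```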

Create /etc/sysconfig/network/ifcfg-ens33f1np1:
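A minimal sketch of this bond-member file, following SUSE's documented settings for bonding members:

```shell
# /etc/sysconfig/network/ifcfg-ens33f1np1 (bond member)
# No address of its own: the IP is configured on the bond
BOOTPROTO='none'
# Start mode SUSE documents for bonding members
STARTMODE='hotplug'
```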

DHCP (bond0 only)

For the public bond, use DHCP:
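A minimal sketch of the public bond using DHCP, assuming ens22f0np0 and ens22f1np1 are your public interfaces. Because DHCP offers are sent to the MAC address of the main public interface, the bond may need to use that MAC; the commented-out LLADDR line is an assumption to adapt, not a confirmed requirement:

```shell
# /etc/sysconfig/network/ifcfg-bond0 (public bond, DHCP)
STARTMODE='auto'
BOOTPROTO='dhcp'
BONDING_MASTER='yes'
# LACP (IEEE 802.3ad); check each member's link state every 100 ms
BONDING_MODULE_OPTS='mode=802.3ad miimon=100'
# Assumed public interfaces
BONDING_SLAVE_0='ens22f0np0'
BONDING_SLAVE_1='ens22f1np1'
# If no DHCP lease is obtained, set the bond's MAC to the main public
# interface's MAC (replace the placeholder, then uncomment):
#LLADDR='<MAC of the main public interface>'
```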

The private bond (ifcfg-bond1) and all physical interface configuration files remain the same as above.

Four interfaces - Fully Private

This configuration aggregates all physical interfaces into a single bond for vRack use only. There is no public IP connectivity.

Following the implementation of OLA in Fully Private mode, the public IP is no longer accessible. Make sure you have an alternative means of access (e.g. through another server in the vRack, or via KVM/IPMI) before applying this configuration.

Create the bond configuration file /etc/sysconfig/network/ifcfg-bond0:
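A minimal sketch of the Fully Private bond, assuming the four interface names used in this guide, the hypothetical vRack address 192.168.0.10/24, and the example private MAC a1:b2:c3:d4:e5:d6 from the Control Panel screenshot. LLADDR is the sysconfig variable for setting a hardware address (see ifcfg(5)):

```shell
# /etc/sysconfig/network/ifcfg-bond0 (Fully Private: four interfaces, vRack only)
STARTMODE='auto'
BOOTPROTO='static'
# Your vRack private IP (example value)
IPADDR='192.168.0.10/24'
# MAC address of the main private interface (example value)
LLADDR='a1:b2:c3:d4:e5:d6'
BONDING_MASTER='yes'
# LACP (IEEE 802.3ad); check each member's link state every 100 ms
BONDING_MODULE_OPTS='mode=802.3ad miimon=100'
BONDING_SLAVE_0='ens22f0np0'
BONDING_SLAVE_1='ens22f1np1'
BONDING_SLAVE_2='ens33f0np0'
BONDING_SLAVE_3='ens33f1np1'
```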

Then configure each physical interface. Edit /etc/sysconfig/network/ifcfg-ens22f0np0:
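A minimal sketch of the contents to use in place of the existing file, following SUSE's documented settings for bonding members (the interface carries no IP address of its own):

```shell
# /etc/sysconfig/network/ifcfg-ens22f0np0 (bond member)
# No address of its own: the IP is configured on the bond
BOOTPROTO='none'
# Start mode SUSE documents for bonding members
STARTMODE='hotplug'
```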

Create /etc/sysconfig/network/ifcfg-ens22f1np1:
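A minimal sketch of this bond-member file, following SUSE's documented settings for bonding members:

```shell
# /etc/sysconfig/network/ifcfg-ens22f1np1 (bond member)
# No address of its own: the IP is configured on the bond
BOOTPROTO='none'
# Start mode SUSE documents for bonding members
STARTMODE='hotplug'
```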

Create /etc/sysconfig/network/ifcfg-ens33f0np0:
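A minimal sketch of this bond-member file, following SUSE's documented settings for bonding members:

```shell
# /etc/sysconfig/network/ifcfg-ens33f0np0 (bond member)
# No address of its own: the IP is configured on the bond
BOOTPROTO='none'
# Start mode SUSE documents for bonding members
STARTMODE='hotplug'
```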

Create /etc/sysconfig/network/ifcfg-ens33f1np1:
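A minimal sketch of this bond-member file, following SUSE's documented settings for bonding members:

```shell
# /etc/sysconfig/network/ifcfg-ens33f1np1 (bond member)
# No address of its own: the IP is configured on the bond
BOOTPROTO='none'
# Start mode SUSE documents for bonding members
STARTMODE='hotplug'
```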

In Fully Private mode, the bond uses the MAC address of the main private interface. The IPADDR field should be set to your vRack private IP.

Applying the configuration

Apply the configuration by reloading all interfaces with wicked:
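For example, run the following as root, ideally from the IPMI/KVM console since connectivity may drop while the bonds are built. `wicked ifstatus` then shows the resulting state; bond1 only exists in the Double LAG setup:

```shell
# Reload every interface whose ifcfg-* file changed
wicked ifreload all
# Show the state of the bond (repeat with bond1 in Double LAG mode)
wicked ifstatus bond0
```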

This may take several seconds while the bond interface is built. To test that the bond is working, ping another server in the same vRack. If the ping succeeds, you are all set. If it does not, double-check your configuration files or try rebooting the server.

You can also verify the bonding parameters using the following command:
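The Linux bonding driver reports each bond's state in /proc/net/bonding/<bond name>, which you can read with `cat /proc/net/bonding/bond0`. Below is a minimal sketch of a hypothetical `check_lacp_bond` helper that checks that file for LACP mode and counts how many member links are up; it relies only on the driver's "Bonding Mode:", "Slave Interface:" and "MII Status:" lines:

```shell
#!/bin/sh
# check_lacp_bond: sanity-check a bonding status file.
# Hypothetical helper; the path defaults to /proc/net/bonding/bond0.
check_lacp_bond() {
  file=${1:-/proc/net/bonding/bond0}
  if [ ! -r "$file" ]; then
    echo "cannot read $file" >&2
    return 2
  fi
  # LACP bonds report "Bonding Mode: IEEE 802.3ad Dynamic link aggregation"
  if ! grep -q '^Bonding Mode: IEEE 802.3ad' "$file"; then
    echo "bond is not in 802.3ad (LACP) mode" >&2
    return 1
  fi
  # Each member interface section starts with a "Slave Interface:" line
  members=$(grep -c '^Slave Interface:' "$file" || true)
  # Count "MII Status: up" lines inside member sections only
  # (the first MII Status line in the file describes the bond itself)
  up=$(awk '/^Slave Interface:/ { in_member = 1 }
            in_member && /^MII Status: up/ { n++ }
            END { print n + 0 }' "$file")
  echo "$up/$members member links up"
  # Succeed only if there is at least one member and all members are up
  [ "$members" -gt 0 ] && [ "$up" -eq "$members" ]
}
```

Run `check_lacp_bond` for bond0, or `check_lacp_bond /proc/net/bonding/bond1` for the private bond in Double LAG mode. A non-zero status means the bond is not in LACP mode or at least one member link is down.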

Go further

Configuring OVHcloud Link Aggregation in the OVHcloud Control Panel

How to configure your NIC for OVHcloud Link Aggregation in Debian 12 or Ubuntu 24.04 using Netplan

How to configure your NIC for OVHcloud Link Aggregation in Debian 9 to 11

How to Configure Your NIC for OVHcloud Link Aggregation in Windows Server 2019
