AI Endpoints - Integration with Apache Airflow

AI Endpoints is covered by the OVHcloud AI Endpoints conditions and the OVHcloud Public Cloud special conditions.

New integration available: We're excited to announce a new integration for AI Endpoints with Apache Airflow. This integration allows you to seamlessly orchestrate AI workloads on OVHcloud infrastructure directly from your Airflow DAGs, and continues our commitment to integrating AI Endpoints into as many open-source tools as possible to simplify its usage.

Objective

OVHcloud AI Endpoints lets developers add AI features to their everyday applications.

In this guide, we will show how to use Apache Airflow to integrate OVHcloud AI Endpoints into your workflow orchestration pipelines.

With Apache Airflow's powerful workflow management capabilities and OVHcloud's scalable AI infrastructure, you can programmatically author, schedule, and monitor AI-powered workflows with ease.

Definitions

- Apache Airflow: An open-source platform to programmatically author, schedule, and monitor workflows. Airflow allows you to define complex workflows as Directed Acyclic Graphs (DAGs) using Python, making it ideal for orchestrating data pipelines, AI workloads, and automated tasks.

- AI Endpoints: A serverless platform by OVHcloud providing easy access to a variety of world-renowned AI models including Mistral, LLaMA, and more. This platform is designed to be simple, secure, and intuitive with data privacy as a top priority.

Why is this integration important?

This new integration offers you several advantages:

- Workflow Orchestration: Schedule and monitor AI tasks as part of your data pipelines.

- Scalability: Leverage Airflow's distributed architecture for parallel AI processing.

- Reliability: Built-in retry mechanisms and error handling for production workflows.

- Flexibility: Combine AI tasks with other data operations in unified workflows.

- Observability: Monitor AI task execution through Airflow's rich UI and logging.

- Models: All of our models are available through the Airflow provider.

Requirements

Before getting started, make sure you have:

- An OVHcloud account with access to AI Endpoints.

- Python 3.8 or higher installed.

- Apache Airflow 2.3.0 or higher installed.

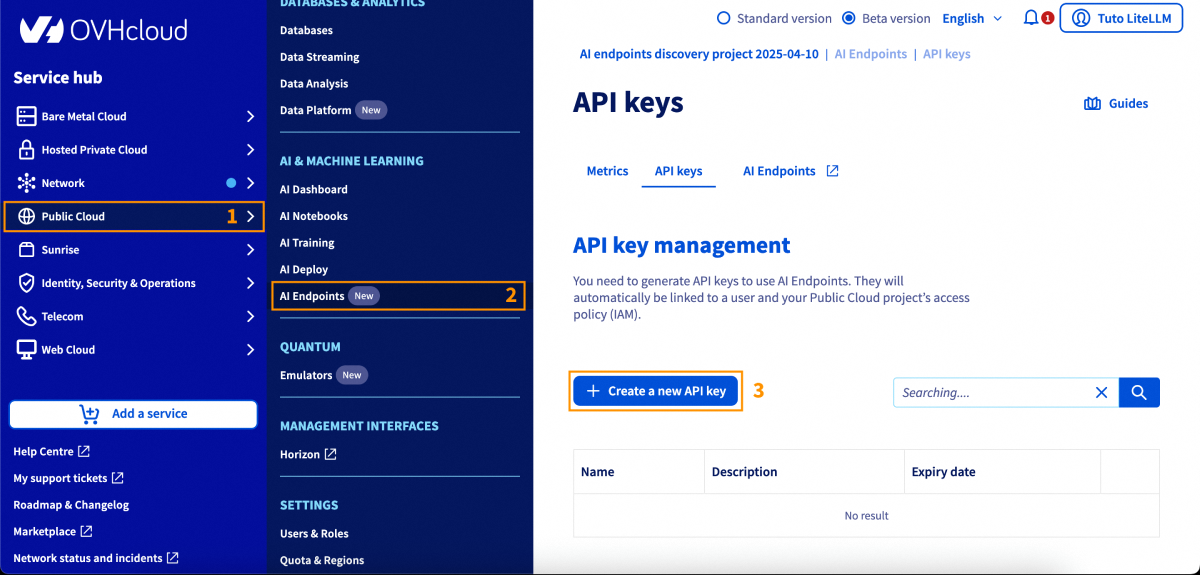

- An API key generated from the OVHcloud Control Panel, in the

Public Cloudsection >AI Endpoints>API keys.
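
The version requirements above can be verified before installing anything else. The following is a minimal sketch using only the Python standard library; the helper names (`parse_version`, `meets_minimum`, `check_environment`) are illustrative and are not part of Airflow or of the OVHcloud provider.

```python
import sys

MIN_PYTHON = (3, 8)
MIN_AIRFLOW = (2, 3, 0)


def parse_version(text):
    """Turn '2.9.1' (or '2.10.0rc1') into a tuple of leading integers."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for char in piece:
            if not char.isdigit():
                break
            digits += char
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def meets_minimum(version_text, minimum):
    """Return True when version_text is at least the minimum tuple."""
    parsed = parse_version(version_text)
    # Pad short versions such as '2.3' so they compare equal to (2, 3, 0).
    padded = parsed + (0,) * (len(minimum) - len(parsed))
    return padded >= minimum


def check_environment():
    """Return a list of human-readable problems; an empty list means OK."""
    if sys.version_info[:2] < MIN_PYTHON:
        return ["Python 3.8 or higher is required"]
    # importlib.metadata is part of the standard library from Python 3.8.
    from importlib import metadata

    problems = []
    try:
        airflow_version = metadata.version("apache-airflow")
    except metadata.PackageNotFoundError:
        problems.append("Apache Airflow is not installed")
    else:
        if not meets_minimum(airflow_version, MIN_AIRFLOW):
            problems.append(
                f"Apache Airflow {airflow_version} is older than 2.3.0"
            )
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print(problem)
```

Running the script prints one line per unmet requirement and nothing when the environment is suitable.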

Instructions

Installation

Install the OVHcloud AI Endpoints provider for Apache Airflow using pip:

You are now ready to get started.

Basic configuration

Setting up Airflow connection

The recommended method to configure your API key is using Airflow connections. You can create a connection through the Airflow UI or CLI.

Using Airflow UI:

- Go to Admin > Connections.

- Click the + button to add a new connection.

- Fill in the details:

| Attribute | Value |
|---|---|
| Connection Id | ovh_ai_endpoints_default |
| Connection Type | generic |
| Password | Your OVHcloud AI Endpoints API key |

Using Airflow CLI:
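
The exact command is not reproduced here. As a hedged sketch, the standard `airflow connections add` subcommand accepts the same three values as the table above; the snippet below assembles that command in Python so the API key never has to be typed into a shell history. The function name `build_add_connection_command` and the `<your-api-key>` placeholder are illustrative assumptions.

```python
import shlex


def build_add_connection_command(api_key, conn_id="ovh_ai_endpoints_default"):
    """Return the argument list for `airflow connections add`."""
    return [
        "airflow", "connections", "add", conn_id,
        "--conn-type", "generic",
        "--conn-password", api_key,
    ]


if __name__ == "__main__":
    # Placeholder key: substitute the real value, ideally read from a
    # secret store or an environment variable rather than hard-coded.
    command = build_add_connection_command("<your-api-key>")
    # shlex.join quotes each argument so the line can be pasted into a shell.
    print(shlex.join(command))
```

The returned list can also be passed directly to `subprocess.run(command, check=True)` on a machine where the `airflow` executable is on the `PATH`.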

Basic usage

Here's a simple usage example for generating text with Large Language Models:
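
The provider's operator code is not reproduced here. To illustrate what a text-generation task typically carries, the sketch below builds a chat-style request body. It assumes the widely used OpenAI-compatible chat-completions shape (`model`, `messages`, `temperature`, `max_tokens`); the function name `build_chat_request` and the `<model-name>` placeholder are hypothetical, and the actual operator parameters may differ.

```python
import json


def build_chat_request(prompt, model, system_prompt=None,
                       temperature=0.7, max_tokens=256):
    """Build a chat-completions style payload (assumed request shape)."""
    messages = []
    if system_prompt:
        # An optional system message steers the tone of the answer.
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": prompt})
    return {
        "model": model,
        "messages": messages,
        "temperature": temperature,
        "max_tokens": max_tokens,
    }


if __name__ == "__main__":
    payload = build_chat_request(
        "Explain what a DAG is in one sentence.",
        model="<model-name>",  # placeholder: pick a model from the catalog
        system_prompt="You are a concise technical assistant.",
    )
    print(json.dumps(payload, indent=2))
```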

Advanced features

Dynamic content with Jinja templating

Use Airflow's Jinja templating for dynamic content in your AI tasks:

Trigger this DAG with configuration:
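
As a hedged illustration, the sketch below shows a prompt that reads a value from the run configuration, and the standard `airflow dags trigger --conf` command that supplies it. `dag_run.conf` and `ds` are built-in Airflow template variables; the DAG id `ai_templating_example`, the `topic` key, and the function name are assumptions made for this example. Which operator fields are rendered depends on each operator's `template_fields`.

```python
import json
import shlex

# Airflow renders `{{ ... }}` expressions in templated operator fields
# just before the task runs. `ds` is the logical date as YYYY-MM-DD.
PROMPT_TEMPLATE = (
    "Write a short summary about {{ dag_run.conf['topic'] }} "
    "for the report dated {{ ds }}."
)


def build_trigger_command(dag_id, conf):
    """Return the argument list for `airflow dags trigger` with a JSON conf."""
    return ["airflow", "dags", "trigger", dag_id, "--conf", json.dumps(conf)]


if __name__ == "__main__":
    # Hypothetical DAG id and configuration key, for illustration only.
    command = build_trigger_command(
        "ai_templating_example", {"topic": "renewable energy"}
    )
    print(shlex.join(command))
```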

Embeddings

Create vector embeddings for semantic search and similarity matching:
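
Once a task has produced embedding vectors, similarity matching usually comes down to comparing them with cosine similarity. The sketch below is a pure-Python illustration; the tiny hand-written vectors stand in for real model output, and the function names are illustrative.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        # A zero vector has no direction; treat it as unrelated.
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vector, documents):
    """Return document names ordered from most to least similar."""
    return sorted(
        documents,
        key=lambda name: cosine_similarity(query_vector, documents[name]),
        reverse=True,
    )


if __name__ == "__main__":
    # Made-up 3-dimensional vectors; real embeddings are much longer.
    documents = {
        "doc_about_cats": [0.9, 0.1, 0.0],
        "doc_about_cars": [0.0, 0.2, 0.9],
    }
    print(rank_by_similarity([1.0, 0.0, 0.1], documents))
```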

Batch embeddings

Process multiple texts in a single operation:
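
When the input list is long, it can help to split it into fixed-size batches so that each task handles a bounded amount of text. The helper below is a minimal sketch; the batch size of 2 is arbitrary and no particular service limit is implied.

```python
def chunked(texts, batch_size):
    """Split a list into consecutive batches of at most batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [
        texts[start:start + batch_size]
        for start in range(0, len(texts), batch_size)
    ]


if __name__ == "__main__":
    texts = ["first", "second", "third", "fourth", "fifth"]
    for batch in chunked(texts, 2):
        # Each batch would be sent to the embedding task as one input list.
        print(batch)
```

The last batch may be shorter than the others, so downstream code should not assume a fixed length.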

Task output and XCom

Access operator outputs in downstream tasks using Airflow's XCom feature:
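
A downstream Python callable can read an upstream result through the task instance that Airflow injects as `ti`. The sketch below assumes a hypothetical upstream task id `generate_text` whose XCom value is a plain string; the real shape of the provider's output may differ. `FakeTaskInstance` is a local stand-in so the function can be exercised without a running Airflow.

```python
def summarize_response(ti):
    """Downstream callable: read the upstream result and describe it."""
    # xcom_pull with a single task id returns that task's return value.
    response = ti.xcom_pull(task_ids="generate_text")
    if response is None:
        raise ValueError("no XCom value found for task 'generate_text'")
    return {"characters": len(response), "preview": response[:40]}


class FakeTaskInstance:
    """Stand-in for Airflow's TaskInstance, for local testing only."""

    def __init__(self, values):
        self._values = values

    def xcom_pull(self, task_ids):
        return self._values.get(task_ids)


if __name__ == "__main__":
    fake = FakeTaskInstance({"generate_text": "Airflow schedules workflows."})
    print(summarize_response(fake))
```

In a DAG, `summarize_response` would be passed as the `python_callable` of a `PythonOperator` placed downstream of the generating task.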

Error handling and retries

Configure retries and error handling for production workflows:
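
Retry behavior in Airflow is controlled by standard operator arguments, commonly shared across tasks through `default_args`. The sketch below uses only arguments defined on Airflow's base operator; the specific values and the `notify_failure` callback are illustrative assumptions to adapt to your own workload.

```python
import logging
from datetime import timedelta

log = logging.getLogger(__name__)


def notify_failure(context):
    """Called by Airflow once a task has failed and retries are exhausted."""
    task_instance = context.get("task_instance")
    task_id = getattr(task_instance, "task_id", "unknown")
    message = f"Task {task_id} failed: {context.get('exception')}"
    log.error(message)
    return message


# Pass this dictionary as `default_args` when creating the DAG so that
# every task inherits the same retry policy.
default_args = {
    "retries": 3,                              # attempts after the first failure
    "retry_delay": timedelta(minutes=2),       # wait before the first retry
    "retry_exponential_backoff": True,         # lengthen the wait on each retry
    "max_retry_delay": timedelta(minutes=30),  # upper bound on the wait
    "on_failure_callback": notify_failure,
}
```

Individual tasks can still override any of these values by passing the same argument directly to the operator.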

Parallel processing

Run multiple AI tasks in parallel to maximize throughput:
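
In Airflow, tasks that do not depend on each other are eligible to run at the same time. The sketch below, written against Airflow 2.x, fans out one task per topic and joins the results. It uses the generic `PythonOperator` with a placeholder `call_model` function rather than the provider's own operators, and the DAG id, task ids, and topics are hypothetical.

```python
from datetime import datetime


def build_prompts(topics):
    """Map a task id to the prompt that task should send."""
    # Topics must be valid task-id fragments (letters, digits, underscores).
    return {
        f"summarize_{topic}": f"Summarize recent news about {topic}."
        for topic in topics
    }


def call_model(prompt):
    """Placeholder for the real AI call; returns a dummy string."""
    return f"(model output for: {prompt})"


def combine_results(ti, task_ids):
    """Collect the outputs of all parallel branches."""
    return list(ti.xcom_pull(task_ids=task_ids))


def build_dag(topics):
    # Imported lazily so the helpers above stay testable without Airflow.
    from airflow import DAG
    from airflow.operators.python import PythonOperator

    prompts = build_prompts(topics)
    with DAG(
        dag_id="parallel_ai_tasks_sketch",
        start_date=datetime(2024, 1, 1),
        schedule_interval=None,  # Airflow 2.3 spelling; later 2.x prefers `schedule`
        catchup=False,
    ) as dag:
        branches = [
            PythonOperator(
                task_id=task_id,
                python_callable=call_model,
                op_kwargs={"prompt": prompt},
            )
            for task_id, prompt in prompts.items()
        ]
        join = PythonOperator(
            task_id="combine_results",
            python_callable=combine_results,
            op_kwargs={"task_ids": list(prompts)},
        )
        # The branches have no dependencies on each other, only on the join.
        branches >> join
    return dag


# In a real DAG file, assign the result at module level so the scheduler
# can discover it, for example: dag = build_dag(["energy", "health"])
```

How many branches actually run concurrently depends on the executor and on Airflow's parallelism settings.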

Go further

You can find more information about Apache Airflow in its official documentation. You can also browse the AI Endpoints catalog to explore the models available through the Airflow provider.

For detailed information about the provider, including additional operators and advanced features, visit the OVHcloud Apache Airflow Provider documentation.

Browse the full AI Endpoints documentation to further understand the main concepts and get started.

If you need training or technical assistance to implement our solutions, contact your sales representative or click on this link to get a quote and ask our Professional Services experts for a custom analysis of your project.

Feedback

Please feel free to send us your questions, feedback, and suggestions regarding AI Endpoints and its features:

- In the #ai-endpoints channel of the OVHcloud Discord server, where you can engage with the community and OVHcloud team members.

- On the GitHub repository for bug reports and contributions.