AI Deploy - Getting started

AI Deploy is covered by OVHcloud Public Cloud Special Conditions.

Objective

OVHcloud provides a set of managed AI tools designed for building your Machine Learning projects.

This guide explains how to get started with OVHcloud AI Deploy, covering the deployment of your first application using either the Control Panel (UI) or the ovhai Command-Line Interface.

Requirements

- A Public Cloud project in your OVHcloud account

OVHcloud Control Panel Access

- Direct link: Public Cloud Projects

- Navigation path:

Public Cloud > Select your project

Instructions

Subscribe to AI Deploy

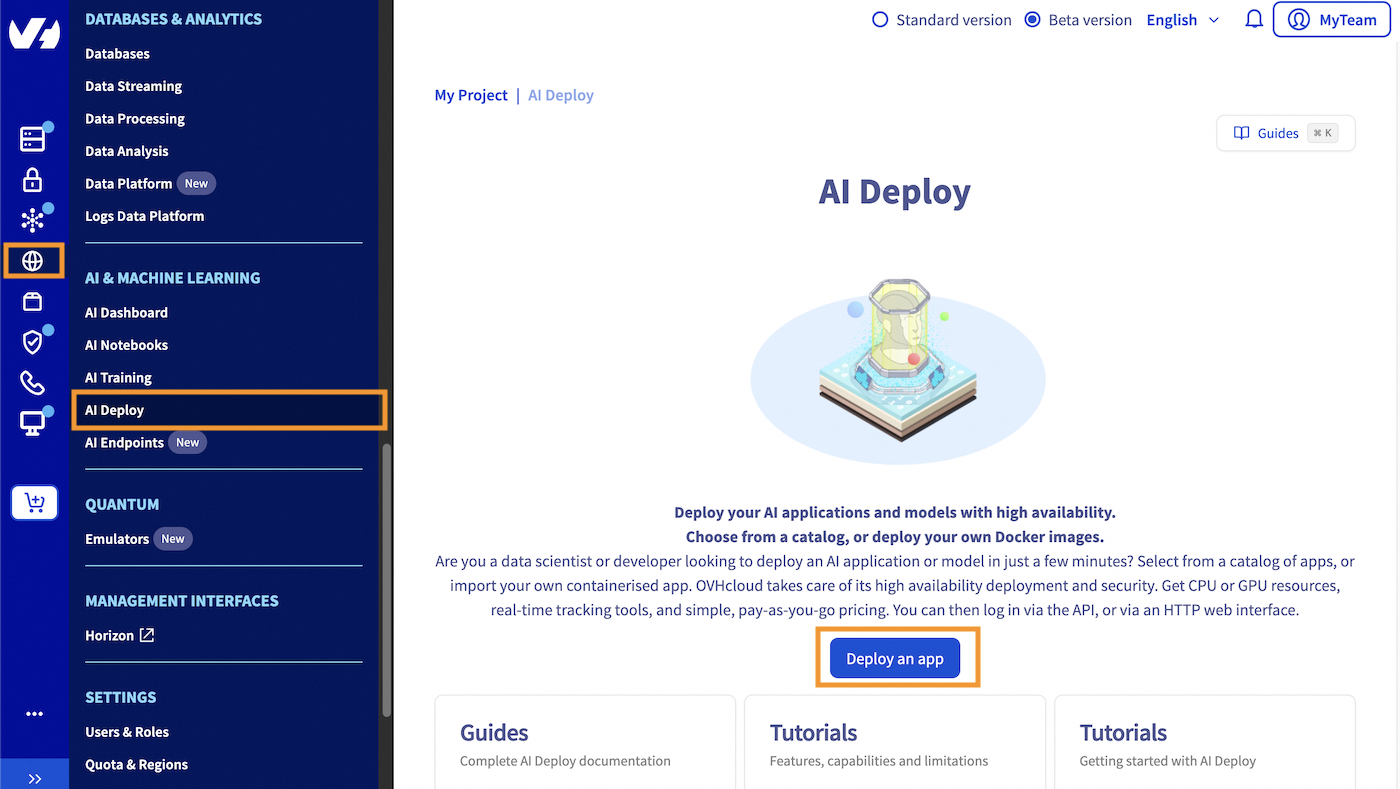

Click this link to access your Public Cloud project, then go to the AI & Machine Learning category in the left menu and choose AI Deploy.

Click on the Deploy an app button and accept the terms and conditions if any.

Once clicked, you will be redirected to the creation process detailed below.

Deploying your first application

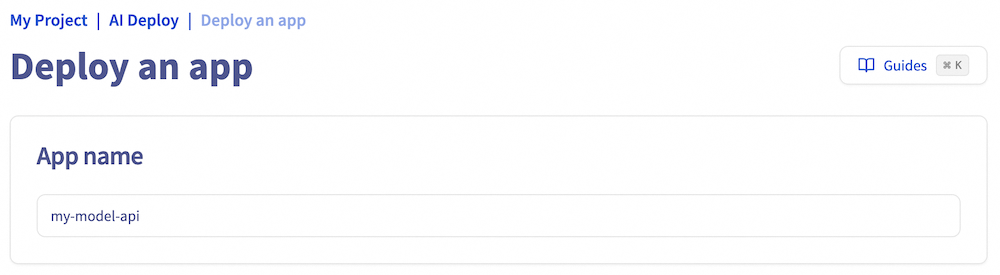

Step 1: Name your application

First, choose a name for your AI Deploy app, or keep the automatically generated one if it meets your needs. A meaningful name makes it easier to manage all your apps.

Step 2: Select the location

Select where your AI Deploy app will be hosted, meaning the physical location.

OVHcloud provides multiple datacenters. You can find the capabilities for AI Deploy in the guide AI Deploy capabilities.

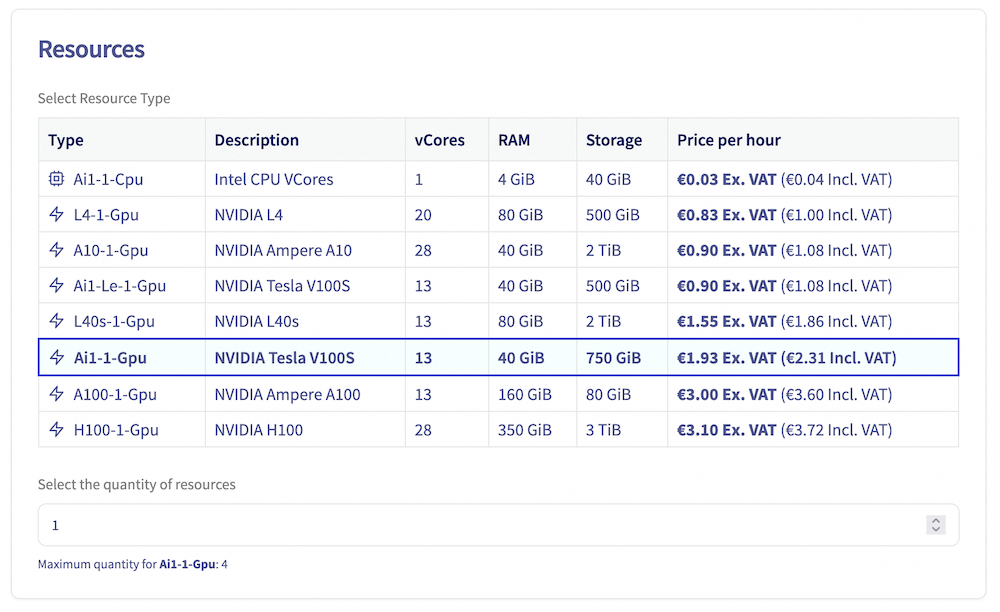

Step 3: Assign compute resources

To deploy an AI Deploy app, you must allocate compute resources. The app supports a range of resource configurations:

- GPU Resources: 1 to 4 GPUs

- CPU Resources: 1 to 12 CPUs

Note that each instance is billed based on its running time.

Each compute resource includes:

- CPU or GPU cores: Processing power for your app

- RAM: Memory of your app

- Local Storage: Storage space for your app

You can adjust the Resource Size to customize the allocation of CPU or GPU cores, RAM, and local storage to meet your app's specific needs.

Step 4: Select the application to deploy

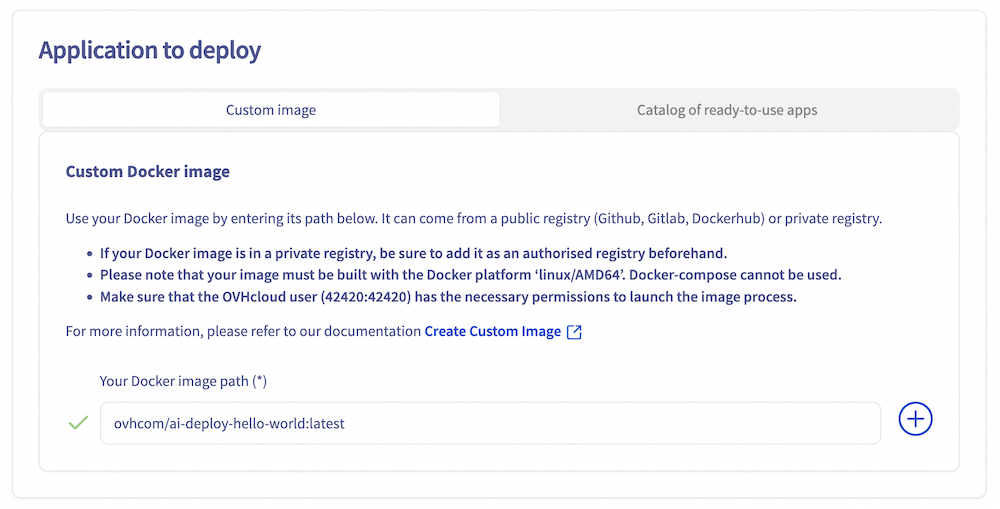

AI Deploy allows a user to deploy applications from two sources:

- From your own Docker image, giving you the full flexibility to deploy what you want. This image can be stored on many types of registry (OVHcloud Managed Private Registry, Docker Hub, GitHub packages, ...) and the expected format is <registry-address>/<image-identifier>:<tag-name>.

- From an OVHcloud catalog with already built-in AI models and applications.
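As a rough illustration of the image format above, the following sketch splits a reference into its registry address, image identifier and tag. The names `ImageReference` and `parse_image_reference` are made up for this example and are not part of AI Deploy. The fallback to the `latest` tag and the rule for recognising a registry host follow the usual Docker conventions, which are assumed to apply here.

```python
from typing import NamedTuple, Optional


class ImageReference(NamedTuple):
    registry: Optional[str]  # None when the reference has no registry address
    image: str               # image identifier, e.g. "ovhcom/ai-deploy-hello-world"
    tag: str                 # tag name, "latest" when omitted (Docker convention)


def parse_image_reference(reference: str) -> ImageReference:
    """Split '<registry-address>/<image-identifier>:<tag-name>' into its parts."""
    if not reference or " " in reference:
        raise ValueError(f"invalid image reference: {reference!r}")

    # The tag follows the last ':' only if that ':' comes after the last '/',
    # so a registry port such as 'localhost:5000/app' is not mistaken for a tag.
    last_slash = reference.rfind("/")
    last_colon = reference.rfind(":")
    if last_colon > last_slash:
        name, tag = reference[:last_colon], reference[last_colon + 1:]
    else:
        name, tag = reference, "latest"

    # Docker treats the first path component as a registry only if it looks
    # like a host name (contains '.' or ':') or is exactly 'localhost'.
    first, _, rest = name.partition("/")
    if rest and ("." in first or ":" in first or first == "localhost"):
        return ImageReference(first, rest, tag)
    return ImageReference(None, name, tag)
```

For instance, `parse_image_reference("ovhcom/ai-deploy-hello-world")` yields no registry address, the full name as image identifier, and the `latest` tag.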

In this tutorial, we will use a demonstration OVHcloud Docker image to deploy your first AI Deploy app. The objective is to deploy and call a simple Flask API which will welcome you by sending back Hello followed by the name you sent in your request. There is no web interface. What is given is an API endpoint that you can reach via HTTP.

If you want to deploy your own image, you need to comply with a few rules like adding a specific user. Follow our Build and use custom images guide. You might also be interested in Using and managing your public and private registries.

To use this demonstration OVHcloud docker image, enter the following name as the Custom Docker image: ovhcom/ai-deploy-hello-world. Then click on the + button to confirm.

You can find this image on the OVHcloud DockerHub. For more information about this Docker image, please check the GitHub repository.

Step 5: Assign compute resources and specify scaling strategy

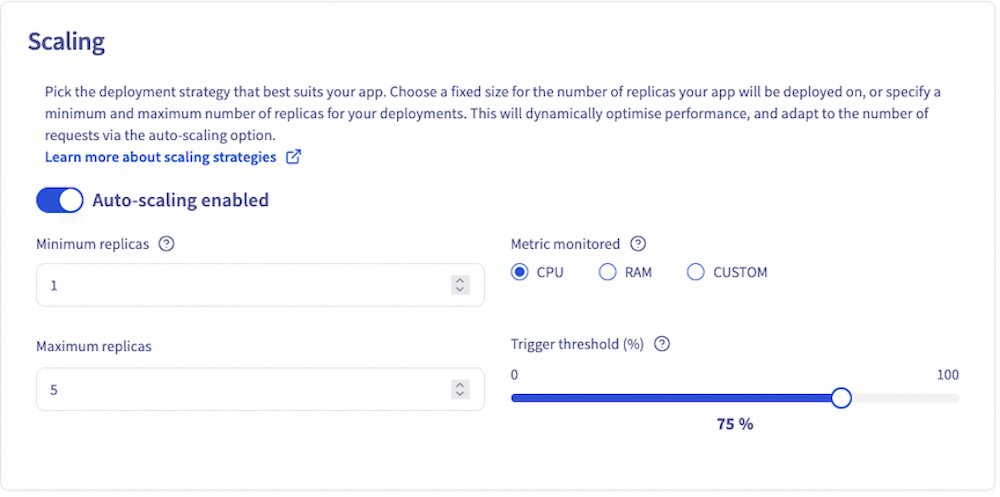

Then you can modify the Number of replicas on which your AI Deploy app will be deployed, according to a scaling strategy.

The static scaling strategy allows you to choose a fixed number of replicas on which the app will be deployed. For this method, the minimum number of replicas is 1 and the maximum is 10. This strategy is useful when your consumption or inference load is fixed. Moreover, it allows you to have fixed costs.

With the autoscaling strategy, it is possible to choose both the minimum number of replicas (1 by default) and the maximum number of replicas. Autoscaling measures the average resource usage across the app's replicas and adds instances if this average exceeds the specified usage threshold (a percentage). Conversely, it removes instances when the average resource usage falls below the threshold. You can even downscale to 0 if you have no usage, thereby limiting costs. The monitored metric can be CPU, RAM, or a custom metric. This solution might be better if you have irregular or sawtooth inference loads.
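The threshold rule described above can be sketched as a single scaling decision. This is a simplified model, not the platform's actual algorithm: the function name `next_replica_count` and the step of one replica per decision are assumptions made for illustration.

```python
def next_replica_count(current: int, average_usage: float, threshold: float,
                       min_replicas: int, max_replicas: int) -> int:
    """Return the replica count after one scaling decision.

    average_usage and threshold are percentages of the monitored metric
    (CPU, RAM or a custom metric), averaged across the current replicas.
    """
    if not 0 <= min_replicas <= max_replicas:
        raise ValueError("expected 0 <= min_replicas <= max_replicas")

    if average_usage > threshold:
        desired = current + 1   # assumption: scale up one replica at a time
    elif average_usage < threshold:
        desired = current - 1   # assumption: scale down one replica at a time
    else:
        desired = current

    # Never leave the [min_replicas, max_replicas] range chosen at deployment.
    return max(min_replicas, min(max_replicas, desired))
```

With a threshold of 75 %, two replicas averaging 85 % usage would grow to three, while a single idle replica stays at the configured minimum.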

For more detailed information about scaling strategies, please refer to our dedicated guide: AI Deploy - Scaling strategies.

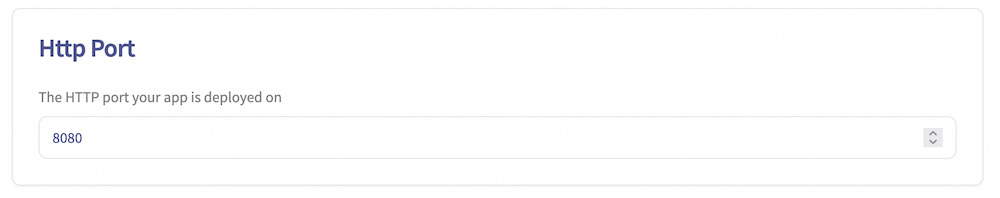

Step 6: HTTP default port

The default exposed HTTP port for your app's URL is 8080. If you are using a framework that requires a different port, you can override the default and configure your application to use that port instead.

Step 7: Access rule

The next parameter to set is Confidentiality. You can opt for public access (open to the internet) or restricted access.

Public access means that everyone is authorized. Use this option carefully. Usually public access is used for tests, but not in production since everyone will be able to use your app.

On the other hand, restricted access requires credentials to reach the app. Two options are available in this case:

- An AI Platform user. This works like a username and password restriction: simple, but with little granularity.

- An AI token (preferred solution). Tokens are very effective since you can link them to labels. This will be explained in Step 8.

We will select Restricted Access for this deployment.

Step 8: Advanced configuration (optional)

This step allows you to customize your AI Deploy app with additional features. You can choose to configure one, several, or none of the options below.

Commands

You can override the default Docker command (entrypoint) with a custom command. This is useful if your Docker image has a specific entrypoint that you want to modify.

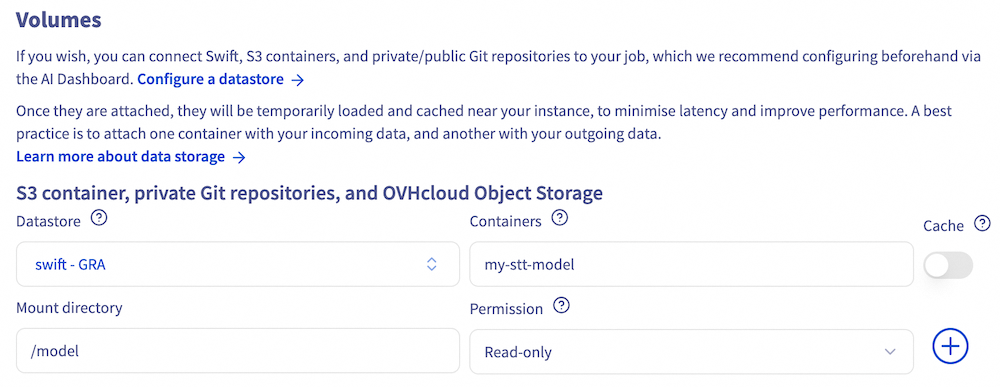

Volumes

If your application requires external data to work, such as scripts or models, you can upload this data to Swift, S3 compatible containers, or private/public Git repositories, and then mount these storage solutions on your app.

You can also mount an Object Storage container as an output folder, for example to retrieve the data generated by your application.

You can attach as many volumes as you want to your app with various options.

In both cases, you will have to specify:

- Storage container or Git repository URL: The name of the container to synchronise, or the GitHub repository URL (the one that ends with .git).

- Mount directory: The location in the app where the synced data is mounted.

There are also optional parameters:

- Authorisation: The permission rights on the mounted data. Available rights are Read Only (ro) and Read Write (rw). Default value is ro.

- Cache: Whether the synced data should be added to the project cache. Data in the cache can be used by other apps without additional synchronization. To benefit from the cache, the new apps also need to mount the data with the cache option.
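To make the required and optional volume parameters concrete, here is a minimal sketch that models one volume as a small data structure. The class `VolumeMount` and its field names are hypothetical and do not correspond to an OVHcloud API. The checks for an absolute mount path and for the cache being off by default are assumptions.

```python
from dataclasses import dataclass

ALLOWED_PERMISSIONS = ("ro", "rw")  # Read Only, Read Write


@dataclass(frozen=True)
class VolumeMount:
    source: str              # container name or Git repository URL
    mount_directory: str     # where the synced data appears inside the app
    permission: str = "ro"   # documented default
    cache: bool = False      # assumption: caching is off unless requested

    def __post_init__(self) -> None:
        if not self.source:
            raise ValueError("source must not be empty")
        # Assumption: the mount directory is an absolute path inside the app.
        if not self.mount_directory.startswith("/"):
            raise ValueError("mount_directory must be an absolute path")
        if self.permission not in ALLOWED_PERMISSIONS:
            raise ValueError(f"permission must be one of {ALLOWED_PERMISSIONS}")
```

For example, `VolumeMount("my-models", "/workspace/models")` describes a read-only input volume, while passing `permission="rw"` would describe an output folder.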

To learn more about data, volumes and permissions, check out the data page.

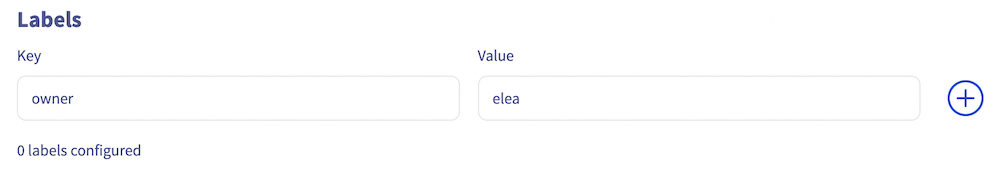

Labels

Then, you have the option to add some Key/Value labels to filter or organize access to your AI Deploy app.

As an example, add a label with Key=owner and Value=elea.

This restricts access to the application to users who hold a token associated with this label (key/value).

Learn more about this feature in the dedicated tutorial.

Availability probe

Finally, you can enable the Readiness probe feature. To do so, provide:

- Probe API endpoint: the /health endpoint of your app.

- Probe port: The port associated with the probe endpoint.

This allows you to monitor the health of your app and ensure it is ready to receive traffic.
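For the probe to succeed, the app itself has to answer on the probe endpoint. The sketch below shows what such an endpoint could look like, using only Python's standard `wsgiref` module rather than the Flask framework used by the demonstration image. Whether the demonstration image exposes `/health` is not stated in this guide, and the names `app` and `serve` are chosen for this example only.

```python
from wsgiref.simple_server import make_server


def app(environ, start_response):
    """Tiny WSGI app: /health answers 200, every other path answers 404."""
    if environ.get("PATH_INFO") == "/health":
        status, body = "200 OK", b"ok"
    else:
        status, body = "404 Not Found", b"not found"
    start_response(status, [("Content-Type", "text/plain"),
                            ("Content-Length", str(len(body)))])
    return [body]


def serve(port: int = 8080) -> None:
    """Serve the app on the given port (8080 is the default HTTP port above)."""
    with make_server("0.0.0.0", port, app) as server:
        server.serve_forever()
```

Calling `serve()` starts the server. With this app, the probe API endpoint would be `/health` and the probe port would be 8080.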

Step 9: Review and launch your AI Deploy app

This final step provides a summary of your AI Deploy app deployment, allowing you to review the previously selected options and parameters.

You can also generate the equivalent ovhai CLI command, which enables you to deploy the same application using the command line interface. This CLI can be downloaded here. For more information, consult the CLI - Launch an AI Deploy app documentation.

To launch your AI Deploy app, click on Order now. Please note that your app will not be immediately available, as it requires some time to:

- Pull the Docker image

- Mount any configured data volumes

Once the deployment is complete, your first AI Deploy app will be running in production and ready to be accessed.

Connect to your AI Deploy app

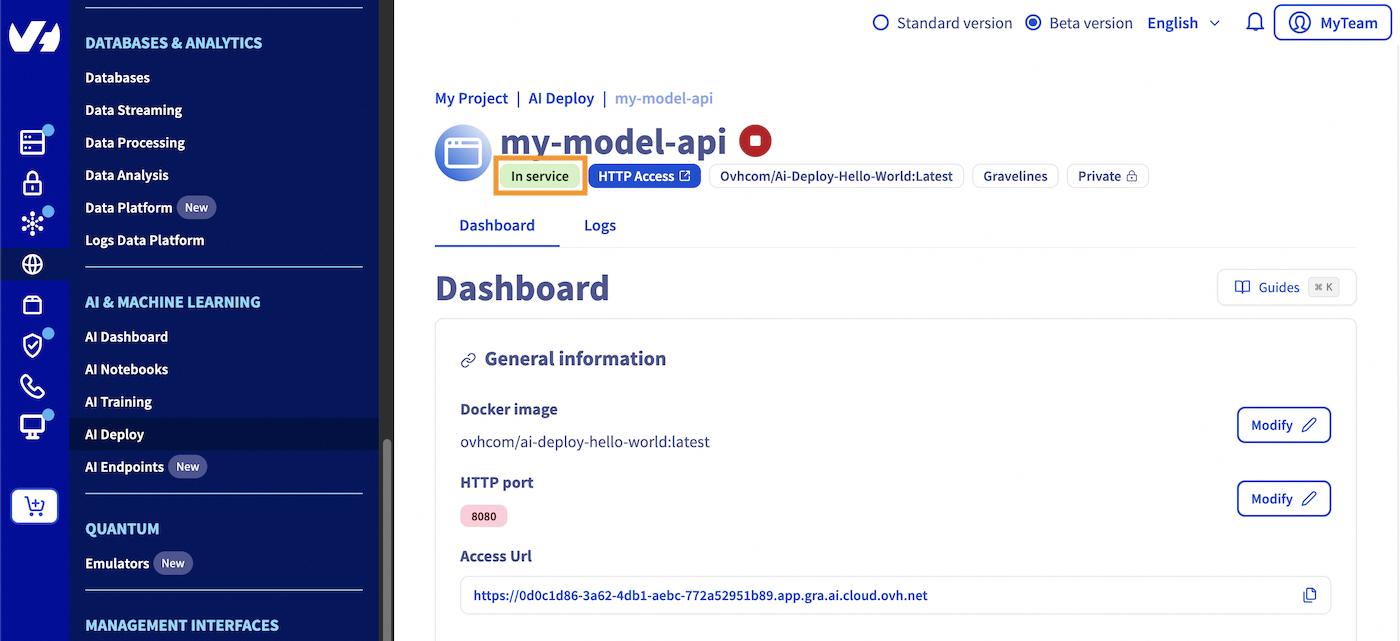

Step 1: Check your AI Deploy app status

First, open your app details and verify that your AI Deploy app has reached the In service status.

For your information, you can access your deployed application by clicking the HTTP access blue button, which will expose the default HTTP port of your app. However, since we have deployed a Flask API in this tutorial, you won't be able to access it through the HTTP access button as no interface was deployed.

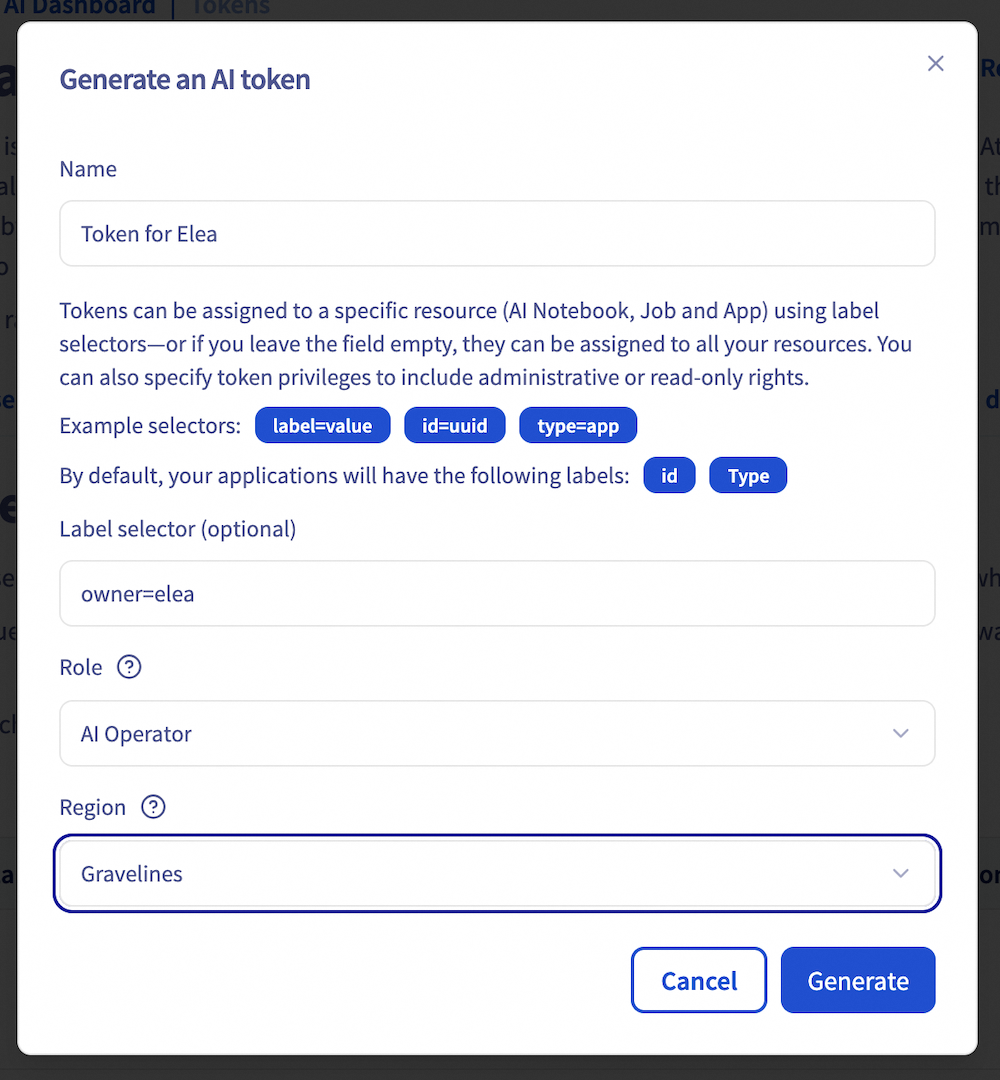

Step 2: Generate a security token

During the deployment process, we selected Restricted access. To query your app, you first need a valid security token.

In your OVHcloud Control Panel left menu, go to the AI Dashboard in the AI & Machine Learning section. Select the Tokens tab.

Click on the + Create a token button then fill in a name, a label selector, a role and region as below:

A few explanations:

- Label selector: you can restrict the token to resources that carry given labels. You can specify an id, a type, or any previously created label such as owner=elea in our case.

- Role: AI Platform Operator can read and manage your AI Deploy app. AI Platform Read only can only read your AI Deploy app.

- Region: tokens are regionalized. Select the region related to your AI Deploy app.
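The relationship between a token's label selector and an app's labels can be pictured with the following sketch. It is an illustrative model only: the function names are hypothetical, and both the comma-separated form for several pairs and the "empty selector matches everything" behaviour are assumptions, since this guide only shows a single `key=value` selector.

```python
from typing import Dict


def parse_selector(selector: str) -> Dict[str, str]:
    """Turn 'owner=elea' into {'owner': 'elea'}."""
    pairs: Dict[str, str] = {}
    for part in selector.split(","):   # assumption: ',' separates several pairs
        part = part.strip()
        if not part:
            continue
        key, sep, value = part.partition("=")
        if not sep or not key.strip():
            raise ValueError(f"expected key=value, got {part!r}")
        pairs[key.strip()] = value.strip()
    return pairs


def token_grants_access(selector: str, app_labels: Dict[str, str]) -> bool:
    """True when every key=value pair of the selector is present on the app."""
    required = parse_selector(selector)
    return all(app_labels.get(key) == value for key, value in required.items())
```

With the label from Step 8, `token_grants_access("owner=elea", {"owner": "elea"})` is true, whereas an app labelled with a different owner would not match.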

Generate your first cURL query

Now that your AI Deploy app is running and your token has been generated, you are ready for your first query.

Since the app uses restricted access, you need to pass the authentication token in an HTTP header of the form Authorization: Bearer <your-token>, following this format:

In our case, the exact cURL code is:

Giving us

If you see this message with the name you provided, you have successfully launched your first app!

Generate your first Python query

If you want to query this API with Python, this code sample with the Python Requests library may suit you:
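As a hedged sketch of such a query, the code below prepares and sends an authenticated request using only Python's standard library (`urllib`) rather than Requests, so that it runs without extra packages. The app URL, the JSON payload keys and the use of POST are assumptions: replace them with the URL shown in your app details and the request shape your application expects.

```python
import json
import urllib.request


def build_query(app_url: str, token: str, payload: dict) -> urllib.request.Request:
    """Prepare an authenticated JSON request for an AI Deploy app."""
    return urllib.request.Request(
        url=app_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # Restricted access: the token travels in the Authorization header.
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",  # assumption: the API accepts a JSON POST body
    )


def send_query(request: urllib.request.Request, timeout: float = 10.0) -> str:
    """Send the prepared request and return the response body as text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

A typical call would be `send_query(build_query(app_url, token, {"name": "Elea"}))`, where `app_url` and the `name` key are placeholders for your own values.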

Result:

That's it!

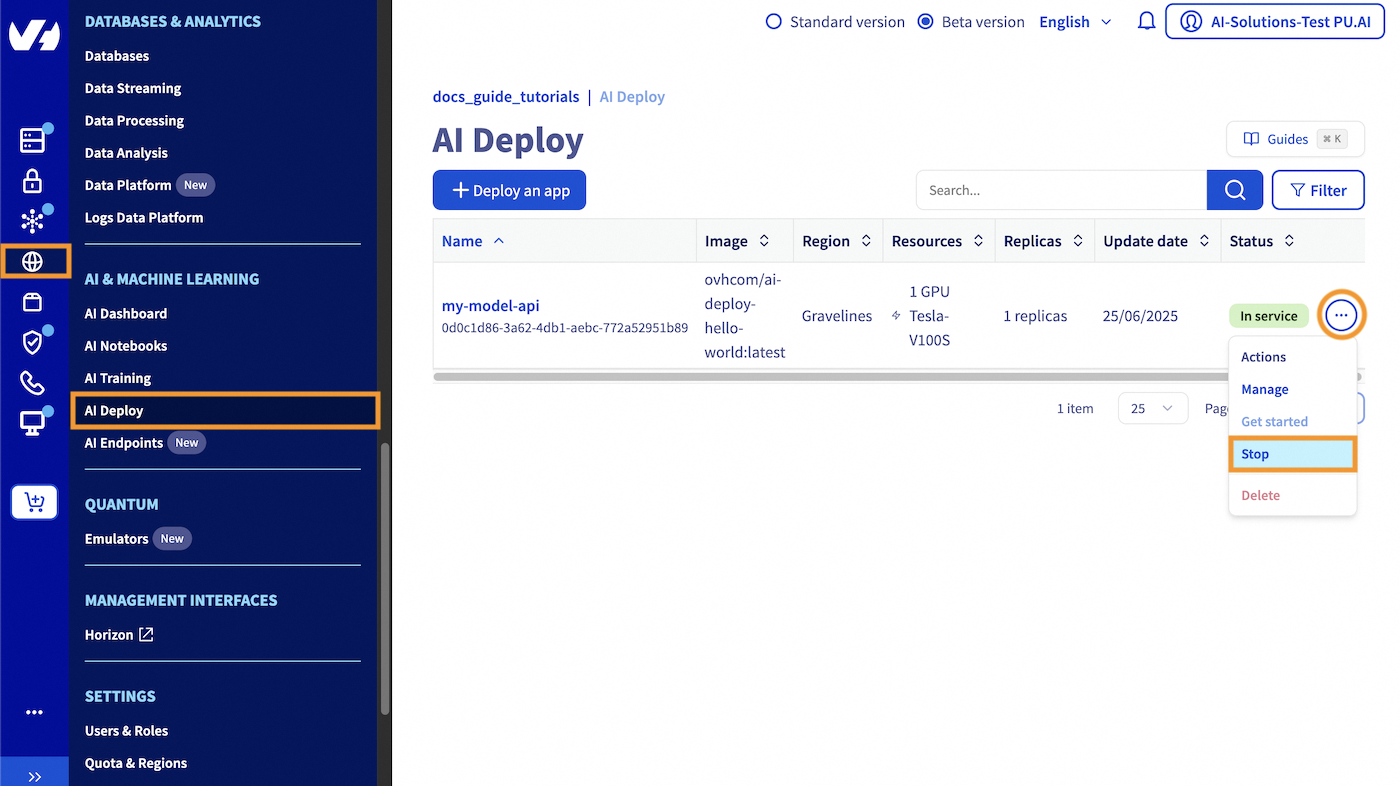

Stop and delete your AI Deploy app

You have the flexibility to keep your AI Deploy app running for an indefinite period. At any time, you can easily stop your application, using either the UI (OVHcloud Control Panel) or the ovhai CLI.

Click this link to access your Public Cloud project, then select the AI Deploy section. Locate the specific AI Deploy app you want to stop. Click the ... button and stop your AI Deploy application by selecting Stop from the context menu.

Once stopped, your AI Deploy app will free up the previously allocated compute resources. Your endpoint is kept, and if you restart your AI Deploy app, the same endpoint can be reused seamlessly. Also, once your app is stopped, compute resources are no longer reserved, so you are not billed for them. Only attached storage may still incur charges.

If you want to completely delete your AI Deploy app, select the Delete action.

Be sure to also delete your Object Storage data if you don't need it anymore, by going to the Object Storage section (in the Storage category).

To stop or delete your app with the ovhai CLI instead, make sure you have installed it on your computer or on an instance.

You can stop your AI Deploy application with the ovhai app stop <app-id> command, where <app-id> is the ID of your app.

Once stopped, your AI Deploy app will free up the previously allocated compute resources. Your endpoint is kept and if you restart your AI Deploy app, the same endpoint can be reused seamlessly.

Also, once your app is stopped, compute resources are no longer reserved, so you are not billed for them. Only attached storage may still incur charges.

If you want to completely delete your AI Deploy app, run the ovhai app delete <app-id> command.

Be sure to also delete your Object Storage data if you don't need it anymore. To do this, you will need to empty it first, then delete it:

Go further

- You can imagine deploying an AI model for sketch recognition thanks to AI Deploy. Refer to this tutorial.

- Do you want to use Streamlit in order to create an app? Here it is.

If you need training or technical assistance to implement our solutions, contact your sales representative or click on this link to get a quote and ask our Professional Services experts for a custom analysis of your project.

Feedback

Please feel free to send us your questions, feedback and suggestions to help our team improve the service on the OVHcloud Discord server!