Using a custom gateway on an OVHcloud Managed Kubernetes cluster

Objectives

In this tutorial we are going to use a custom gateway deployed in vRack with a Managed Kubernetes cluster.

Why?

By default, the Pods you deploy in a Kubernetes cluster leave the cluster with the output IP of the Node they run on, so the cluster has as many output IPs as it has Nodes. This becomes a problem when a remote service only accepts traffic from whitelisted IPs and your cluster uses autoscaling, because Nodes (and their IPs) are created and deleted on the fly.

One solution is to route outgoing traffic through a custom gateway, which gives you a single output IP: that of the gateway.

You will:

- create a private network

- create subnets

- create an OpenStack router in each region and link it to the external provider network and to the regional subnet

- create an OVHcloud Managed Kubernetes cluster with the private gateway

- test the Pod's output IP

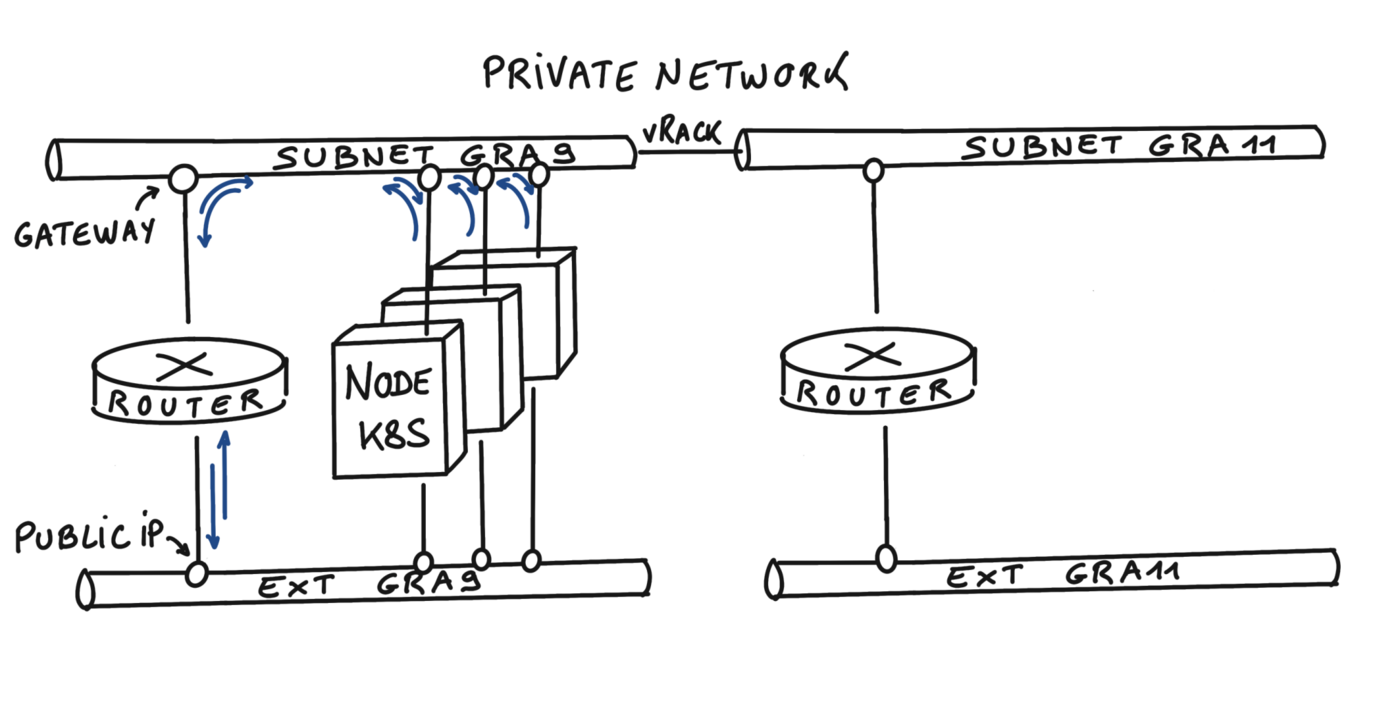

At the end of this tutorial you should have the following flow:

This tutorial shows how to create the private network in two regions, but you can use a single region if you prefer, for example GRA9.

Pre-requisites

- A Public Cloud project in your OVHcloud account.

- The OpenStack API CLI installed.

- Being familiar with the OVHcloud API.

- Being familiar with Terraform if you wish to use it.

- The JSON parser tool jq installed.

OVHcloud Control Panel Access

- Direct link: Public Cloud Projects

- Navigation path: Public Cloud > Select your project

Initialization

To set up a working environment, you need to load the OpenStack and OVHcloud API credentials. To help you, we also provide several useful scripts and templates.

First, create a utils folder in your environment/local machine.

Then, download the ovhAPI.sh script into it.

And then add execution rights to the ovhAPI.sh script:

To manage OpenStack, load the content of the utils/openrc file. To manage the OVHcloud API, load the variables contained in the utils/ovhAPI.properties file.

Create the utils/openrc file, or download it from your OpenStack provider. It should look like this:

Create the utils/ovhAPI.properties with your generated keys and secret:

You should have a utils folder with three files:
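The three expected files are ovhAPI.sh, openrc and ovhAPI.properties, as named in the steps above. As a quick sanity check, the following minimal Python sketch reports any of them that is missing. It assumes the folder is called utils and sits in the current working directory; the function name is only illustrative.

```python
from pathlib import Path

# File names taken from the steps above; the folder location is an assumption.
EXPECTED_FILES = ("ovhAPI.sh", "openrc", "ovhAPI.properties")


def missing_utils_files(utils_dir):
    """Return the expected file names that are not present in utils_dir."""
    base = Path(utils_dir)
    return [name for name in EXPECTED_FILES if not (base / name).is_file()]


if __name__ == "__main__":
    missing = missing_utils_files("utils")
    if missing:
        print("Missing from utils/:", ", ".join(missing))
    else:
        print("utils/ contains all three expected files.")
```

An empty list means the folder is complete and you can move on to loading the variables.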

Load variables:

Get your OpenStack Tenant ID and store it into the serviceName variable.

You should have a result like this:

Create Private Network

Important: this tutorial assumes that your Public Cloud project has already been added to your vRack.

We are using the OVHcloud API to create the private network. For this tutorial, we are using the two regions GRA9 and GRA11.

Create a tpl folder next to the utils folder, and create the data-pvnw.json file inside it with the following content:

Create the private network named demo-pvnw in GRA9 and GRA11 regions and get the VLAN ID.

You should have a result like this:

At this point, your private network is created. In this example, its ID is pn-1083678_20.

Create subnets

For this tutorial, we are splitting a /24 subnet, to obtain two /25 subnets.

Ref: https://www.davidc.net/sites/default/subnets/subnets.html

| Name | Region | CIDR Address | Gateway | DHCP Range | Broadcast |
|---|---|---|---|---|---|
| Subnet 1 | GRA9 | 192.168.0.0/25 | 192.168.0.1 | 192.168.0.2-192.168.0.126 | 192.168.0.127 |
| Subnet 2 | GRA11 | 192.168.0.128/25 | 192.168.0.129 | 192.168.0.130-192.168.0.254 | 192.168.0.255 |
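The values in this table can be derived rather than computed by hand. The sketch below uses Python's standard ipaddress module to split the /24 into two /25 subnets and to work out the gateway, DHCP range and broadcast address of each. The helper name and dictionary keys are illustrative choices, and the convention of taking the first usable address as the gateway follows the table above.

```python
import ipaddress


def describe_subnet(cidr):
    """Derive the addressing plan used in the table for one subnet."""
    net = ipaddress.ip_network(cidr)
    hosts = list(net.hosts())  # usable addresses, excluding network and broadcast
    return {
        "cidr": str(net),
        "gateway": str(hosts[0]),      # first usable address
        "dhcp_start": str(hosts[1]),   # range starts right after the gateway
        "dhcp_end": str(hosts[-1]),    # last usable address
        "broadcast": str(net.broadcast_address),
    }


# Split the /24 into two /25 halves, one per region.
parent = ipaddress.ip_network("192.168.0.0/24")
halves = list(parent.subnets(new_prefix=25))
plan = {
    region: describe_subnet(str(net))
    for region, net in zip(("GRA9", "GRA11"), halves)
}

for region, info in plan.items():
    print(region, info)
```

Changing the parent network or the new prefix length gives the equivalent plan for a different address space.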

Create these two data files in the tpl folder:

data-subnetGRA9.json file:

data-subnetGRA11.json file:

Note: To be clear, the parameter "noGateway": false means "Gateway": true. We want the subnet to explicitly use the first IP address of the CIDR range.

Then create subnets with appropriate routes, and finally get IDs (subnGRA9 & subnGRA11):

You should have a result like this:

For now, it's not possible to add routes to the subnet via the API, so we must use the OpenStack CLI instead.

OpenStack router

Create the routers

We have the ability to create OpenStack virtual routers. To do this, we need to use the OpenStack CLI. Create routers and get their IDs (rtrGRA9Id & rtrGRA11Id):

You should have a result like this:

For the moment you can only create a virtual router in the GRA9 and GRA11 regions, but this feature will be released in other regions in the coming weeks and months.

Now you can show the details of your new virtual router in GRA9 to find its IP address:

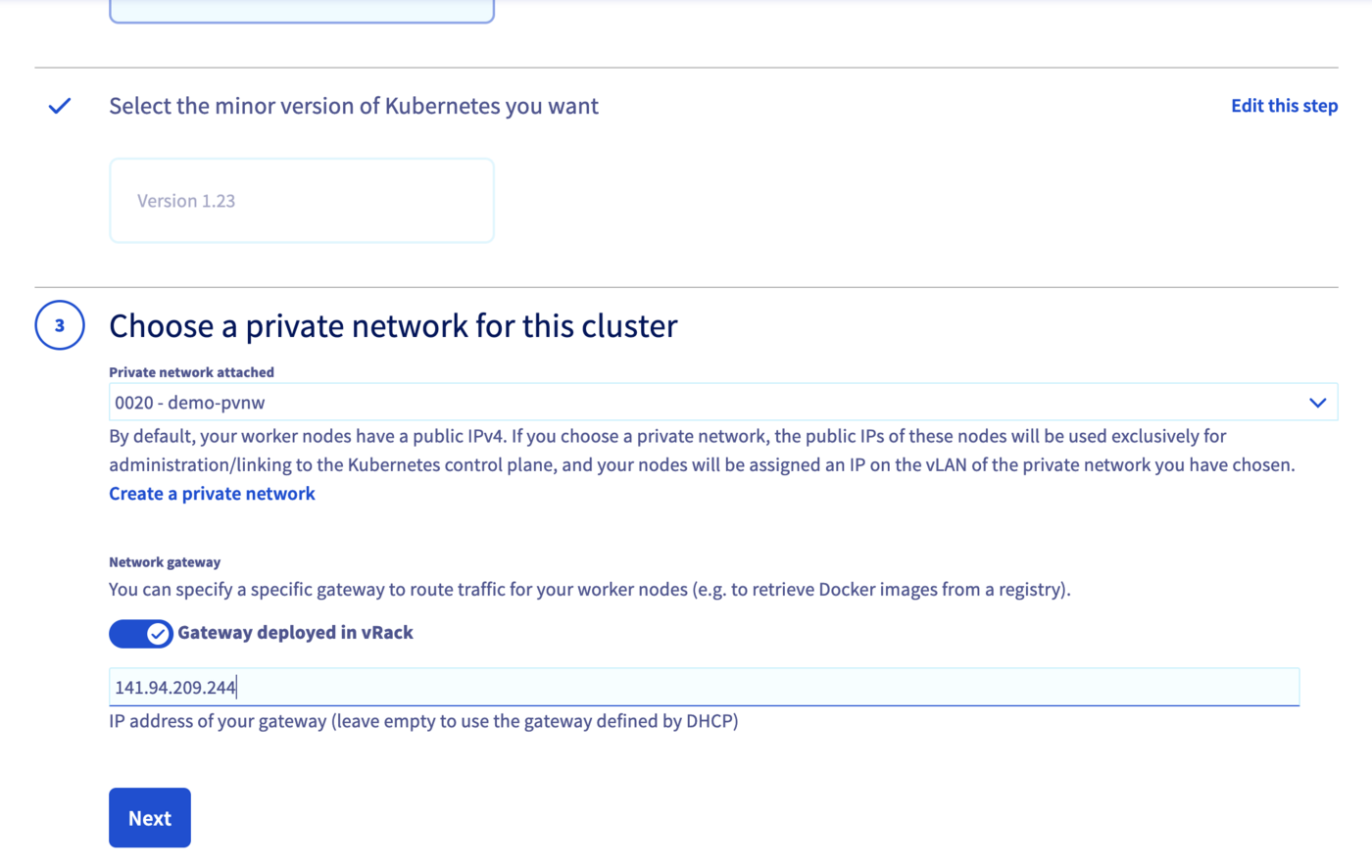

As you can see, in this example, the IP of the gateway will be 141.94.209.244.

Link the router to the external provider network

First, get the regional external network ID (extNwGRA9Id & extNwGRA11Id), then link the router to it:

You should have a result like this:

Link the router to the subnet

Do the same with the regional subnets:

Create a Kubernetes cluster with private gateway

Now the network is ready. Create an OVHcloud Managed Kubernetes cluster, specifying the use of the gateway defined on each subnet.

Note: for the rest of this tutorial, we only use the GRA9 region, but you can repeat the exact same steps to create a cluster in the GRA11 region.

This guide uses version 1.34 for the Kubernetes cluster, but you can use any other supported version.

First, get the private network IDs (pvnwGRA9Id & pvnwGRA11Id), then create the OVHcloud Managed Kubernetes Cluster, and finally get the cluster ID (kubeId):

Create a tpl/data-kube.json.tpl file to use as the request data, and set the right parameters. The file should look like this:

You should have a result like this:


You can create your networks and subnets using Terraform by following this guide.

Create a file, for example kubernetes-cluster-test.tf, with the following content:

You can create your resources by entering the following command:

Now wait until your OVHcloud Managed Kubernetes cluster is READY.

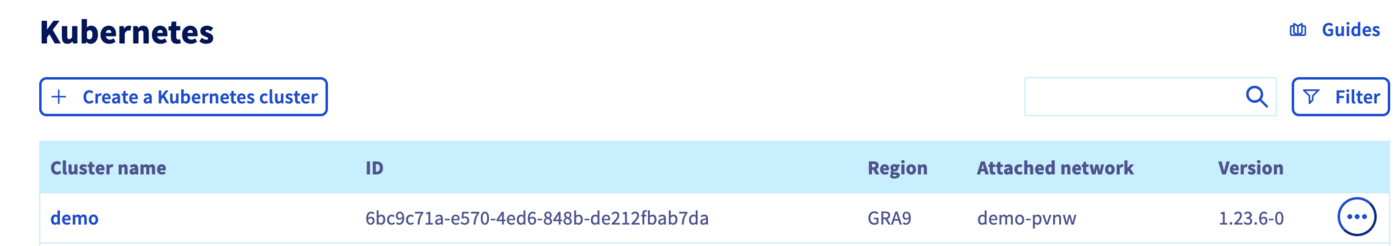

For that, you can check its status in the OVHcloud Control Panel:

Access the administration UI for your OVHcloud Managed Kubernetes clusters by clicking on Managed Kubernetes Service in the left-hand menu:

As you can see, your new cluster is attached to demo-pvnw network.

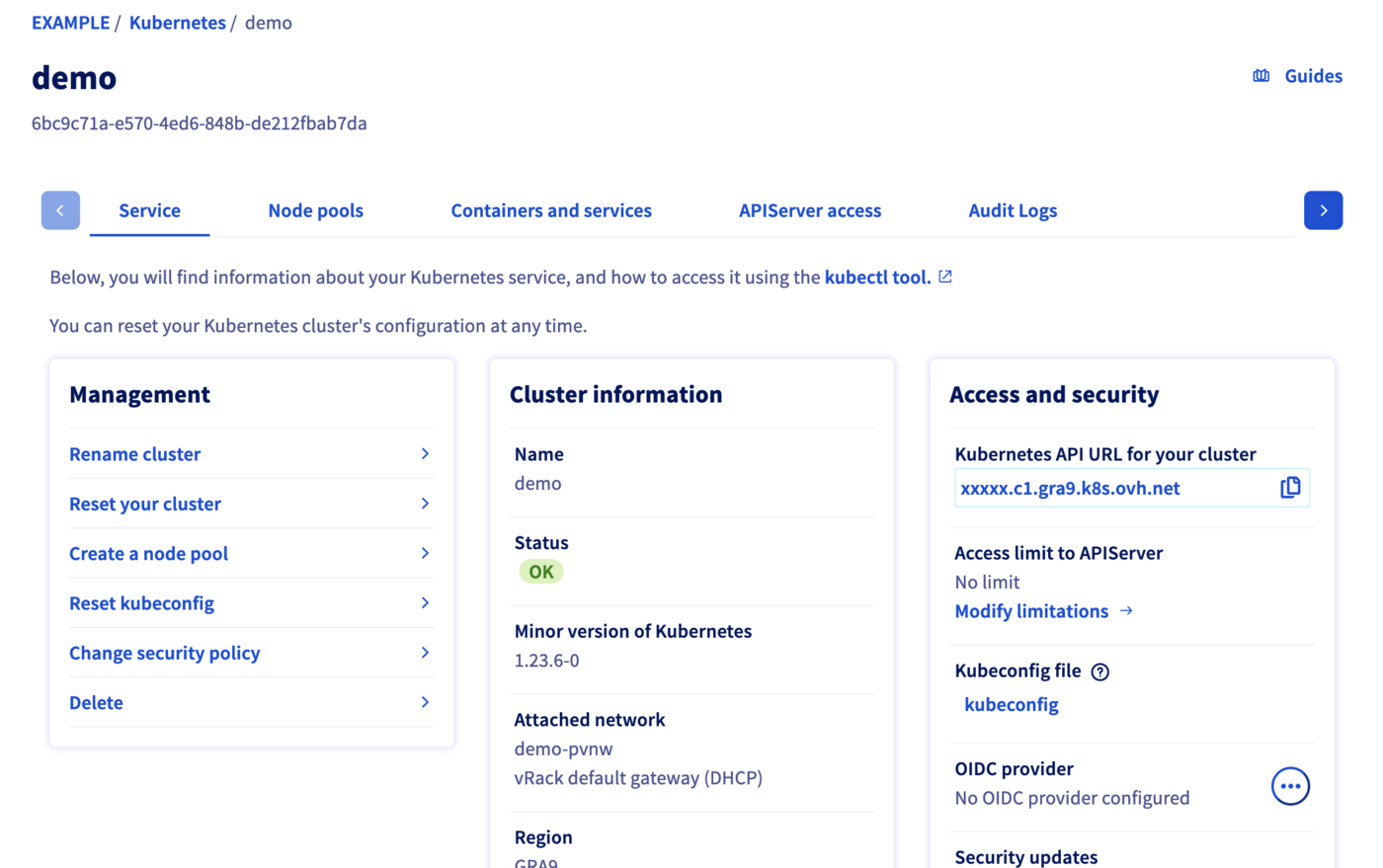

And now click in your demo created Kubernetes cluster in order to see its status:

When your cluster's status is OK, you can go to the next section.

Get Kubeconfig file

To work with the freshly created Kubernetes cluster, you must get the kubeconfig file.

To use this kubeconfig file and access your cluster, you can follow our configuring kubectl tutorial, or simply add the --kubeconfig flag to your kubectl commands.

Test

List the running nodes in your cluster:

You should obtain a result like this:

Now test the cluster by running a simple container that requests its public IP address.

You should obtain a result like this:

The IP address of our Pod is indeed that of our gateway!
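If you repeat the test from several Pods or Nodes, the comparison can be automated. The following is a minimal sketch, not part of the original procedure: it takes a list of observed IP addresses and confirms that every one of them equals the gateway IP. The gateway IP is the example value shown earlier in this guide, while the function name and the sample readings are hypothetical.

```python
import ipaddress


def pods_exit_via_gateway(observed_ips, gateway_ip):
    """Return True when every observed source IP equals the gateway IP."""
    gateway = ipaddress.ip_address(gateway_ip)
    observed = [ipaddress.ip_address(ip.strip()) for ip in observed_ips]
    # An empty list proves nothing, so it is treated as a failure.
    return bool(observed) and all(ip == gateway for ip in observed)


GATEWAY_IP = "141.94.209.244"  # router IP from the example earlier in this guide
# Hypothetical readings, e.g. collected by running the test from several Pods.
readings = ["141.94.209.244", "141.94.209.244\n"]

print(pods_exit_via_gateway(readings, GATEWAY_IP))
```

A result of False would mean at least one Pod still leaves the cluster with a Node IP instead of the gateway IP.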

Cleanup

To delete created resources, please follow these instructions:
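The order of the subsections below matters: each resource is deleted before the resources it relies on. The sketch below models that ordering with the standard graphlib module (Python 3.9 or later). The dependency edges are an assumption inferred from the order of this section and from the note that a router's linked ports must be removed first; they are not taken from an API specification.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Each key must be deleted AFTER everything in its set.
# These edges are an assumption inferred from this section, not an API rule.
DELETE_AFTER = {
    "router ports": {"kubernetes cluster"},
    "routers": {"router ports"},
    "subnets": {"router ports"},
    "private network": {"subnets"},
}


def deletion_order(delete_after):
    """Return one valid order in which the resources can be deleted."""
    return list(TopologicalSorter(delete_after).static_order())


order = deletion_order(DELETE_AFTER)
print(" -> ".join(order))
```

Routers and subnets have no constraint between them in this model, so either may come first once the router ports are gone.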

Kubernetes cluster

Routers

To delete an OpenStack router, you must first remove the linked ports.

Subnets

Private Network

Go further

- If you need training or technical assistance to implement our solutions, contact your sales representative or click on this link to get a quote and ask our Professional Services experts for help with your specific use case.

- Join our community of users.